The editors of Microwave Journal asked three of the leading test and measurement companies that are heavily involved in software defined instruments to weigh in on the future of RF/microwave test and measurement instrumentation. Experts from National Instruments, Agilent Technologies and Aeroflex contributed their views on this subject.

Redefining the Way We Solve Test Challenges

Mike Santori, National Instruments, Austin, TX

The role of software in test and measurement systems has changed dramatically over the past few decades. Today, software is the most critical core technology in modern, high performance measurement systems because it is the only thing that can abstract the fundamentally growing complexity of those systems. However, simply running software on computer processors is not enough. The most challenging applications are not possible without engineers that can use software to specify and design the behavior of their own instrumentation. This ability to create software designed instruments is at the heart of a revolution that is taking place in RF instrumentation and, more broadly, in the general test and measurement industry.

In the Beginning – Automating Measurements

The role of software in test and measurement systems has advanced steadily since the 1970s, when IEEE-488, also known as GPIB, standardized the interface for programmable instrumentation. Up to that time, taking measurements was largely accomplished with benchtop instruments, a pencil and a notebook. There were a variety of different proprietary instrument controllers and interfaces available, but their capabilities were rudimentary and they were used mainly by advanced users.

The role of software in test and measurement took a huge leap forward in the 1980s, with the introduction of the original IBM PC and the first National Instruments (NI) GPIB interface board. With the use of PC software, engineers could use general-purpose PCs to create automated test systems that reliably and repeatedly acquired measurements, analyzed those measurements and presented results that could be shared widely.

From Instrument Control to Measurement Platform

In the late 1980s and into the 1990s, a new concept of “virtual instrumentation” began to take hold in the test and measurement world. This concept revolutionized the role of computers and especially software in test and measurement systems. Rather than viewing a PC as simply a computer for automating measurements with GPIB, virtual instrumentation used the computer itself as a measurement platform. Moore’s Law ensured that the processing power of a PC quickly outstripped the technology used in stand-alone instruments. Further, computer memory, storage capacity and graphics display capabilities far outpaced those of traditional instruments. Thus, a general-purpose PC quickly became a better computing platform than a traditional instrument.

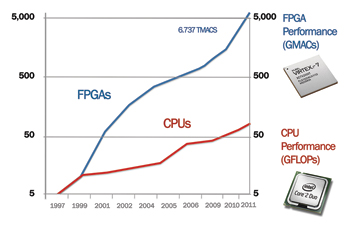

Figure 1 FPGA processing power is growing at a rate that exceeds that of CPUs.

Two critical elements were required to enable a virtual instrumentation system to meet and ultimately exceed the capabilities of traditional instruments: modular measurement hardware and software. On the hardware side, early computer plug-in boards offered fairly low-quality measurement capabilities compared to benchtop instruments, which relied on proprietary data converters. Demands of broad markets, such as consumer audio and wireless infrastructure, drove the development of off-the-shelf data converters that could perform high quality measurements when used on computer plug-in boards. Computer-based measurements took a big leap forward with the development of measurement-specific computing platforms, especially PXI (PCI eXtensions for Instrumentation), which was developed in the 1990s. PXI combines PCI computer technology with instrumentation-specific timing and synchronization capabilities. Soon, PXI virtual instruments were solving some of the most demanding measurement problems, including high-performance RF measurements.

Yet, it was software that really made virtual instrumentation possible. Not only was software required to acquire, analyze and present measurements in a PC environment, but it had to do so in a sufficiently abstracted manner. In essence, abstraction software was required to enable engineers and scientists to efficiently solve their test and measurement challenges, without having to be experts in computer science and architecture. First released in the mid-1980s, NI LabVIEW software was one of the leading solutions for virtual instrumentation software and started the trend toward software playing a central role in modern test and measurement systems.

Enabling Software Designed Instrumentation with FPGAs

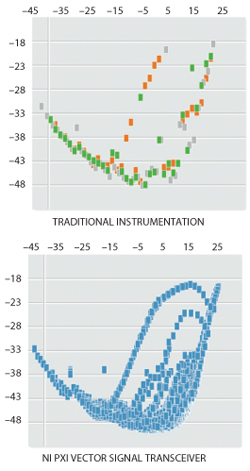

Figure 2 The NI PXIe vector signal transceiver benefits from FPGA.

The next major step in measurement capabilities is being enabled with the inclusion of FPGA-based measurement hardware. Looking forward to the future, it is important to consider that “instruments” in the traditional sense are no longer single-function measurement devices, but have instead become measurement systems. In addition, engineers are looking to instruments not only to test devices, but also to design and prototype much larger systems.

Field Programmable Gate Array (FPGA) is a key technology that is bringing new levels of performance to next-generation instrumentation. FPGAs offer substantial processing power (see Figure 1). With the inclusion of FPGAs, there is now a software-based capability to push measurement functions deep into the hardware itself.

Many of today’s RF instruments already benefit from fixed-functionality FPGAs to execute tasks such as flatness correction, ADC linearization, IQ calibration and digital down conversion. Software designed instruments, such as the vector signal transceiver shown in Figure 2, benefit from FPGA technology in an entirely new way because the FPGA is available to the user for customization. For example, moving the instrument control and decision making from a PC to an FPGA can dramatically reduce measurement time in complex measurement systems. Also, this capability combined with advanced FPGA-based signal processing will enable instruments to function in a wider range of embedded applications as well.
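Digital down conversion, one of the FPGA tasks named above, can be illustrated in a few lines. The following is a minimal software sketch, not the implementation used in any particular instrument: it mixes real samples with a complex exponential, low-pass filters with a simple block average and decimates. The function name, the 100 MS/s sample rate and the 10 MHz test tone are assumptions chosen for illustration.

```python
import cmath
import math


def digital_down_convert(samples, sample_rate_hz, center_hz, decimation):
    """Mix real samples to baseband, average each block, keep one value per block."""
    # Step 1: multiply by a complex exponential at -center_hz (the oscillator).
    mixed = [
        x * cmath.exp(-2j * math.pi * center_hz * n / sample_rate_hz)
        for n, x in enumerate(samples)
    ]
    # Steps 2 and 3: a block average acts as a crude low-pass filter, and
    # emitting one output per block performs the decimation.
    baseband = []
    for start in range(0, len(mixed) - decimation + 1, decimation):
        block = mixed[start:start + decimation]
        baseband.append(sum(block) / decimation)
    return baseband


# Hypothetical test signal: a unit-amplitude 10 MHz tone sampled at 100 MS/s.
SAMPLE_RATE_HZ = 100e6
TONE_HZ = 10e6
tone = [math.cos(2 * math.pi * TONE_HZ * n / SAMPLE_RATE_HZ) for n in range(1000)]

# Tuning to the tone frequency moves it to DC; the image at twice the
# frequency completes two whole cycles per block and averages out.
iq = digital_down_convert(tone, SAMPLE_RATE_HZ, TONE_HZ, decimation=10)
```

A hardware implementation would typically replace the block average with a proper decimating filter; the sketch only shows the order of operations.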

System Design Software – The Key to Software Designed Instrumentation

Figure 3 The FPGA-based NI PXIe vector signal transceiver improved measurement performance by 200 times.

The right system design software tool is essential to pull together the computing and measurement technologies in today’s modular hardware. From its beginning as instrument control software, LabVIEW, for example, has evolved to be a comprehensive system design platform that allows engineers to create complex, high performance measurement systems. Engineers can use a common set of tools and languages to target applications on both processors and FPGAs, alleviating the need to know different languages and tools. It provides a higher-level abstraction to work at the system level, while also enabling engineers to create lower-level optimizations to address very high performance or complex requirements.

Multi-Mode RF Device Characterization

Faced with testing a new 802.11ac product, Qualcomm Atheros had to test its device under more operating conditions than ever before, resulting in more than an order of magnitude increase in measurement complexity. Using an FPGA-based vector signal transceiver and software, the company was able to design a test system that synchronizes digital DUT control with RF measurements. The resulting test system reduces overall test time by more than an order of magnitude and enables engineers to observe device behavior in multiple operating modes.

As seen in Figure 3, the traditional test instrumentation was used to obtain an iterative set of measurements. Because the measurement time was very high, the test engineers had to choose a subset of operating points to characterize, resulting in essentially a “guesstimate” over the device’s operating characteristics. With traditional instrumentation, approximately 40 points of meaningful WLAN transceiver data were collected per iteration.

However, by switching to an FPGA-based instrument approach, the company improved measurement performance by 200 times, enabling the engineers to acquire all 300,000 operating modes in a single test sweep. The resulting characteristic curves, shown at the bottom of Figure 3, revealed much more detailed information about the device. The speed increase of the system made full gain table sweeps practical, so that all 300,000 points could be acquired.
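The scale of the change can be checked with simple arithmetic on the figures quoted above. The sketch below uses only the numbers from the text (40 points per iteration, 300,000 operating points, a 200 times improvement). The helper `sweep_time_s` and its linear timing model are assumptions added for illustration, since the article does not state a per-point measurement time.

```python
# Figures taken from the text above.
TOTAL_OPERATING_POINTS = 300_000
POINTS_PER_ITERATION = 40      # traditional instrumentation
REPORTED_SPEEDUP = 200         # FPGA-based approach

# Share of the operating space seen in one traditional iteration (percent).
coverage_percent = 100 * POINTS_PER_ITERATION / TOTAL_OPERATING_POINTS

# Number of 40-point iterations needed to cover every operating point.
iterations_for_full_coverage = TOTAL_OPERATING_POINTS // POINTS_PER_ITERATION


def sweep_time_s(points, seconds_per_point, speedup=1.0):
    """Total sweep time, assuming time scales linearly with point count.

    seconds_per_point is a hypothetical input, not a value from the article.
    """
    return points * seconds_per_point / speedup
```

One traditional iteration covers roughly 0.013 percent of the operating space, and 7,500 such iterations would be needed for full coverage, which is why engineers previously had to settle for a subset of operating points.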

Instrumentation in Embedded Applications

A second class of applications for software designed instrumentation is embedded communications and signal processing. While measurement devices have traditionally been thought of as instruments, modular, software designed instrumentation allows engineers to use RF instrumentation in embedded applications as well. Today, a growing number of engineers are using modular PXI instruments for embedded applications, like spectrum monitoring, passive radar systems (see Figure 4) and even communications system prototyping and software defined radio. For more information, please read our January 2013 article on a new passive radar system developed by SELEX at www.mwjournal.com/aulos. These applications require instrumentation to be increasingly small and modular, with better access to deterministic signal processing targets. Communications system design software must be able to abstract increasingly complex systems, enabling engineers to implement existing and new communications algorithms and deploy those algorithms on processors and FPGAs.

Figure 4 Complex systems, such as a passive radar system, are designed and deployed with NI LabVIEW software and NI PXI hardware embedded in the final system.

Looking Toward a Future of Converged RF Design and Test

Software designed instrumentation blurs the line between design and test like never before. One of the more intriguing opportunities will be the ability to share IP between design and test, whether that IP runs on a processor or an embedded FPGA. Using system design software, engineers will be able to use the same tools to create a new communication protocol and move that protocol to FPGA-based hardware for prototyping. Today, this is very challenging because of the disparity between the mathematics software used to create the algorithms and the design tools used to implement those algorithms. Advances in higher level synthesis and integration of multiple design models of computation will be required to fully enable engineers to achieve this seamless transition between design, implementation and test.

As a final thought, it is interesting to note that software in test and measurement has come a long way from its beginnings as simply a vehicle to automate a set of stand-alone instruments. Instead, software has become the heart of the instrumentation itself, enabling instruments to solve more difficult problems in both measurement and system design. In effect, automation is now an embedded capability of the system that is required to meet the complex measurement requirements that engineers face. Today’s software designed measurement systems are just the first generation to deliver what are sure to be game-changing RF design and measurement capabilities for a long time in the future.

The Future of Software Defined Instrumentation

Jean Dassonville and Phil Lorch, Agilent Technologies, Santa Clara, CA

Today’s RF wireless and microwave design/manufacturing engineers face emerging test challenges driven by increasingly complex modulation schemes, an ever growing number of communication standards, wider information bandwidths and new frequency bands and applications. Complicating matters, they often need to build integrated radios or other subsystems that work across analog (RF and IF) and digital (for baseband data processing) domains.

The ultimate goal for these engineers is to turn their ideas into validated products as quickly and reliably as possible. In order to achieve this goal, they expect their test equipment to provide enough flexibility to accelerate measurement insight into new and complex design problems and support the evolution of communications standards, while also maintaining a high level of measurement integrity (accuracy and traceability). Using this type of equipment, engineers can be assured their measurements will be truly reliable and provide a sound basis for good decision making in R&D and production (shipment-readiness decisions).

Figure 5 Simplified block diagram of Agilent's PXA vector signal analyzer.

Flexibility, Performance and Measurement Integrity

When it comes to RF and microwave instrumentation, test and measurement (T&M) equipment architectures usually combine physical hardware circuitry for high-quality RF signal generation and analysis, with ASICs and reconfigurable logic (FPGAs) that perform necessary digital signal processing (DSP), embedded firmware and application software. Figure 5 shows a simplified block diagram of a vector signal analyzer, highlighting its key hardware and software elements. Together, these hardware and software elements define the instrument’s capabilities and functionality. Each element of the instrument’s block diagram contributes to its overall measurement accuracy, performance, speed, flexibility and ultimately, its level of application insight. As a result, instrument designers and system architects often spend a great deal of effort leveraging decades of measurement experience to fine-tune the interoperation of these elements. By doing so, they are able to optimize a given instrument’s flexibility and performance over a wide range of conditions, while maintaining measurement integrity (see the sidebar below, “Defining Measurement Integrity”).

The combination of hardware, reconfigurable logic, DSP and firmware enables new levels of measurement performance. With accurate models of an instrument’s analog, RF and IF paths, for example, embedded digital signal processing engines can perform real-time corrections and compensate for signal degradation, improving the measurement accuracy and flatness of wideband, digitally modulated communications or radar signals. Because of this, T&M manufacturers are now marrying these hardware and software elements to improve performance, while at the same time ensuring the instrument’s internal complexity is hidden, allowing RF design and test engineers to focus on their own design tasks and applications. Some RF instruments now include internal, field-upgradeable PC modules that enable connectivity to the design environment and are flexible enough to support evolving standards, new CPUs and additional memory in support of new, more demanding applications.
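The idea of correcting a reading with a model of the signal path can be shown with a much simplified, scalar example. The sketch below is not how any specific instrument performs its corrections: it assumes a hypothetical calibration table of path gain error versus frequency, interpolates it linearly and subtracts the error from a raw power reading. The table values and function names are invented for illustration.

```python
from bisect import bisect_right

# Hypothetical calibration table: (frequency in Hz, path gain error in dB).
CAL_TABLE = [(1.0e9, -0.40), (2.0e9, -0.10), (3.0e9, 0.30), (4.0e9, 0.80)]


def gain_error_db(freq_hz, table=CAL_TABLE):
    """Linearly interpolate the path gain error at freq_hz."""
    freqs = [f for f, _ in table]
    # Outside the calibrated range, hold the nearest table value.
    if freq_hz <= freqs[0]:
        return table[0][1]
    if freq_hz >= freqs[-1]:
        return table[-1][1]
    hi = bisect_right(freqs, freq_hz)
    (f0, g0), (f1, g1) = table[hi - 1], table[hi]
    return g0 + (g1 - g0) * (freq_hz - f0) / (f1 - f0)


def corrected_power_dbm(raw_dbm, freq_hz):
    """Remove the modeled path error from a raw power reading."""
    return raw_dbm - gain_error_db(freq_hz)
```

With this table, a raw reading of -10.0 dBm at 2.5 GHz is corrected by the interpolated error of 0.1 dB. Real instruments apply far richer models, including phase, in real time across the full signal bandwidth.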

New Levels of Flexibility and Connectivity

In some cases, design and test engineers want to access an instrument’s internal reconfigurable hardware resources and embedded firmware so they can customize it to their own needs. They like the idea of flexibility and want to benefit from it, but they do not typically want to deal with the added complexity, time or effort required to build new measurements from the ground up. They may also have some of their own functional measurement intellectual property (IP) that they wish to incorporate for testing purposes.

To address this emerging demand for more flexibility, instrument manufacturers have two distinct strategic options. The first option maximizes instrument flexibility through access to its internal resources by exposing and shifting the complexity of the instrument’s design toward the test engineer. Without extensive validation and further testing against known reference standards, however, this sort of “instrument disaggregation” can negatively impact overall measurement integrity. It lacks traceability to standards, guaranteed specifications and a well-defined metrology process to back up the measurements. Consequently, the test engineer is forced to spend more time developing basic instrumentation, as well as the test, and then needs to verify it before any actual testing work can take place.

Defining Measurement Integrity

As unique as any RF and microwave test situation may be, measurement integrity is crucial. What is measurement integrity? Quite simply, measurement integrity focuses on producing consistent results, test-to-test and day-to-day, with respect to metrology and traceability standards, and on delivering the right level of performance (that is, accuracy and measurement range), given speed requirements, to help design and test engineers understand the device’s real behavior and make better decisions.

Moreover, as the engineer’s test application becomes more hardware specific, any newly-developed measurement IP becomes difficult to reuse on other new test platforms with different reconfigurable logic or electronic modules. This problem is similar to one that occurs with software programming. For example, on one hand, programming in high-level languages, such as C and C++, enables code reuse across multiple platforms. On the other hand, programming in Assembly Language enables more flexibility and faster execution, but is usually more complex and the resulting design becomes very dependent on both the hardware and system architecture.

The second strategic option that instrument manufacturers may employ couples flexibility with a clear focus on accelerating measurement insight and maintaining measurement integrity. Unlike the first option, this approach keeps the complexity hidden from end-users, leaving them free to focus on their own design tasks and overall workflow.

This approach is best achieved through an integrated portfolio of design and measurement building blocks. Agilent Technologies’ strategy, for example, provides users with an integrated design and measurement environment that includes both hardware (such as benchtop and modular form-factor instruments) and software building blocks. The latter encompasses external software like the 89600 VSA for signal analysis or Signal Studio for signal creation, as well as embedded instrument firmware such as the X-Series advanced measurement applications. These hardware and software elements interoperate seamlessly with Agilent’s electronic design automation (EDA) tools such as Advanced Design System (ADS), GoldenGate and SystemVue and also work with third-party software, like Visual Studio and MATLAB, to enable construction of new application-specific measurement solutions.

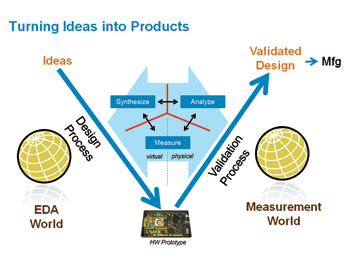

Figure 6 Bringing the EDA and measurement worlds together.

Quickly turning an idea into a shipping product requires a true closing of the traditional gaps between design, validation and final manufacturing test and can be accomplished by tightly integrating solutions for design automation with test instruments for performing highly accurate measurement and test. Figure 6 illustrates how bringing the EDA and measurement worlds closer together allows engineers to more quickly turn their ideas into validated, shippable products. To better understand this concept, consider an analogous situation that has occurred in the PC industry. In the early days of this industry, open PC architectures and operating systems enabled individuals to build customized PCs by selecting from a variety of CPUs, hard drives, graphics accelerators, operating systems and finally, custom application software. However, this customization came at the expense of added complexity and forced the user to test his or her custom-built PC after putting all these components together.

In contrast, a more integrated strategy, focused on key T&M applications, enables an easier user experience. In the PC realm, using pre-built computers, smart mobile and entertainment devices and cloud storage, individuals can easily customize their own user experience and then seamlessly share data between each one of their devices with little to no worry or hassle. In the RF and microwave design realm, an integrated “pre-built” measurement environment yields a similar benefit. The building blocks of the design and measurement world “just work.” They interoperate seamlessly, leaving the engineer to focus on his or her design and testing tasks, rather than having to build and verify measurement software or hardware.

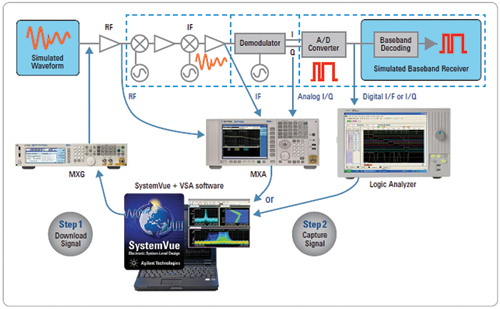

Figure 7 Modulation measurements can be performed with RF signal analyzers and logic analyzers.

Evolving Instrumentation

Market and technology forces will keep driving engineers and designers to demand faster measurement insight through flexible and scalable design and measurement solutions that span RF, analog and digital domains, regardless of the instrument form factor. One of the main drivers for this trend is the increasing integration of RF, microwave, analog and high-speed digital technologies inside today’s newly designed devices. As an example of this cross-domain integration, consider modulation quality measurements, which often need to be performed on digitized IQ data captured with a logic or protocol analyzer. As most wireless communication designs include analog and digital paths, modulation measurements can be performed with a combination of RF signal analyzers and logic analyzers integrated with software (see Figure 7).

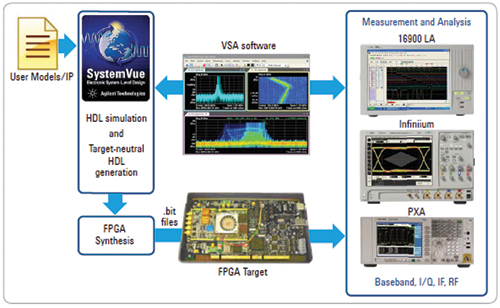

Figure 8 Software such as SystemVue provides modeling analysis and measurement integration.

Solutions that consist of integrated design and measurement building blocks can address these challenges by bridging the virtual and real worlds to integrate the product development workflow from simulation to prototyping and validation. Software provides modeling analysis and measurement integration that enable engineers to quickly move ideas to proven, real-world hardware. Agilent’s RF workflow environment extends and builds on the hardware and software building blocks with an integrated design and measurement approach to address emerging test challenges (see Figure 8, which shows design and integration with FPGAs and RF for a software defined radio development workflow). Initially, only a small portion of the overall block diagram will actually be available. In the early stages of a new design project, more of the system elements will therefore need to be simulated before the projected system performance can be ascertained. Engineers may even have some of their own IP that they want to exercise, but with no complete radio available (that is, with RF up or down conversion), they will be unable to test it unless an integrated approach that involves simulation of the missing pieces is available. As more of the entire system design is realized in the form of prototypes, more physical measurements can be performed and compared with simulated results and vice versa.

Going forward, the vast majority of designers will expect the same measurement integrity that exists in today’s instruments, but with the flexibility to support new communication standards, wider modulation bandwidths and possibly even higher frequencies. An approach that integrates design and measurement building blocks provides an ideal means of answering this need. Realizing this vision, however, will require instrument vendors to continue developing engineer-centric integrated measurement solutions that combine the key building blocks previously described: instruments (in a variety of form factors), instrument firmware and application software, as well as EDA tools. The benefit of bringing these building blocks together is two-fold: enabling higher efficiency and ultimately, faster time-to-market for today’s RF and microwave system designers.

Maximizing Return on Test Equipment Investment

Dave Hutton, Aeroflex, Plainview, NY

The market for RF and wireless test and measurement is a particularly demanding one, requiring instruments to be flexible and to provide both a long term return on investment and low overall cost of ownership. The almost ubiquitous use of software-defined radio (SDR) technology in LTE and 3G test systems has proved crucial in meeting these demands, enabling the rapid addition of support for new 3GPP features and new radio technologies, while providing the capability to test new telecommunications technologies and enhancements when required by the market. PXI modules are often used in wireless and RF test products to allow a common hardware and software platform approach, which includes hardware modules and reusable software building blocks. The functionality and applications of these test instruments, whether stand-alone or modular instrumentation, are determined and defined entirely by PC-based software.

Flexibility

“The SDR approach meets the test needs in both R&D and manufacturing for network infrastructure manufacturers, network operators and handset manufacturers,” said Evan Gray, product and marketing director at Aeroflex. “Customers want the same test equipment to support multiple technologies, new radio technologies and enhancements to existing ones (for example, from HSPA to DC-HSPA, from LTE to LTE-A, or a combination of LTE and W-CDMA technologies), and they do not want to change hardware platforms to achieve this.”

For R&D applications, SDR enables a single investment in a platform to keep up with rapid changes in the standards. For manufacturing customers, it enables straightforward upgrades to new radio standards and protects the investment in test infrastructure. The ability to upgrade in situ and to convert between testing different technologies on the same platform are significant advantages for a manufacturing line.

Customer-specific modifications to modular test equipment are relatively easy to make because customers can access the APIs at different levels and thus integrate the system at the level of functionality that suits their particular requirements. The software defined approach also allows customers to write their own analysis and generation tools, because the digitizers and generators can produce or load IQ data directly.
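As an illustration of the kind of analysis tool a user might write once raw IQ data is available, the following minimal sketch computes the average power and crest factor (peak-to-average power ratio) of a block of complex samples. It does not use any vendor API; the function names and the two-tone test signal are assumptions made for the example.

```python
import cmath
import math


def average_power(iq):
    """Mean squared magnitude of the complex samples."""
    return sum(abs(s) ** 2 for s in iq) / len(iq)


def crest_factor_db(iq):
    """Peak-to-average power ratio of the complex envelope, in dB."""
    peak_power = max(abs(s) ** 2 for s in iq)
    return 10 * math.log10(peak_power / average_power(iq))


# Hypothetical captures: one period of a constant-envelope tone, and the
# sum of two equal tones at opposite frequency offsets.
N = 64
single_tone = [cmath.exp(2j * math.pi * k / N) for k in range(N)]
two_tone = [
    cmath.exp(2j * math.pi * k / N) + cmath.exp(-2j * math.pi * k / N)
    for k in range(N)
]
```

The constant-envelope tone has a crest factor of 0 dB, while the two-tone signal comes out at about 3 dB, which is the kind of difference between signal types that the test hardware has to accommodate.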

The software defined approach means that the lifetime of the instruments is extended and the costs of upgrading for future radio technologies are reduced, so that the customer has the benefit of a low total cost of ownership as well as a future-proof test system. Without SDR, the cost of supporting multiple technologies would be much higher, as additional hardware platforms would be required for each technology. In many software defined instruments, the measurement software can run on commercial PCs, enabling users to benefit from continuing improvements in low-cost PC processing power and data storage technology. Test software can also be upgraded as easily as conventional software.

Enabling Technologies

The use of digital signal processors, microprocessors, standardized PXI modules and FPGAs underpins both general purpose RF test equipment and application-specific wireless test instruments. PXI modular equipment forms the backbone of much of today’s software defined instrumentation. Whether it is sold in modular form to OEMs developing their own test systems, or used in-house by test and measurement vendors who may be producing either one-box solutions for R&D test or sophisticated production ATE systems, almost all test equipment on the market today is essentially modular. The user benefits from the assurance that the performance will be the same, whether the hardware is supplied as a module or as part of an instrument. The PXI modules generally use FPGAs for local control, sequencing and generic DSP, along with high bandwidth DACs and ADCs, allowing the majority of the control, analysis and flexibility to be defined by software running on the controlling PC.

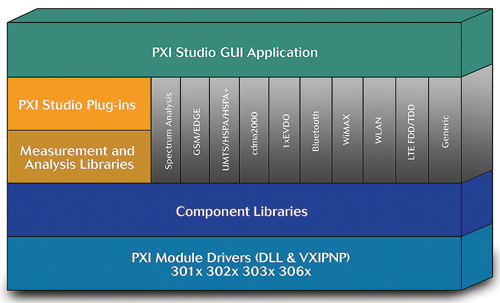

Figure 9 PXI software suite featuring modules for a wide range of communication standards that can be readily added or upgraded.

Figure 9 shows the basic architecture of a PXI software suite that allows mix-and-match modules to be used and readily updated to cover all the latest communications standards and device types. This modular approach to the software enables a single integrated user interface (UI) across all of the compatible PXI modules. It is a flexible application that provides a single UI to whatever arrangement of source/analysis modules is connected to the PC bus, either to one or to a number of vector signal analyzers, vector signal generators or both. Although it may not be possible to provide software for every test scenario, the test cases that are most in demand, especially those that require coordination of stimulus and response, can largely be covered.

The flexibility of RF hardware is the real key to allowing an instrument to be fully software defined and it is in this area that the real advances over the next few years are likely to take place. The hardware modules need to include flexible wide-tuning, wide-bandwidth transmitter and receiver blocks, coupled to flexible baseband processing hardware. It is important that the bandwidth of the radio hardware is adequate to cover all the frequency bands to be tested, in order that the other parameters can be selected entirely by the software. After several years of development, RF MEMS technology is maturing and, in the near future, Aeroflex expects low insertion loss MEMS switches to make it easier to switch seamlessly between different bands. Active duplexers will provide the frequency agility that is needed to deal with fragmented spectrum. The development of programmable RF will bring programmable bandpass filters and will allow wideband LNAs and power amplifiers to be deployed in future software defined instrument platforms.

Transmitters and receivers in signal generators and analyzers need to be able to cope with a wide range of crest factors for the different communications standards, as well as the broad operating frequency range and signal bandwidth. This requires tightly defined RF leveling capability, the ability to closely control I/Q modulation bandwidth and orthogonality, and the integrity of the arbitrary waveform generator, which needs a high sampling rate to produce all the required waveforms. Also crucial is the ability to calibrate the instrument to achieve a good EVM through tight control of amplitude and phase balance and DC offsets.
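The link between these calibration terms and EVM can be made concrete with a small model. The sketch below is a simplified illustration, not a standards-compliant measurement: it applies an assumed IQ impairment model (gain imbalance, quadrature phase skew and DC offset) to ideal QPSK symbols and computes RMS EVM normalized to the RMS reference power, which is one common convention. All names and impairment values are hypothetical.

```python
import math


def impair(symbols, gain_imbalance_db=0.0, phase_skew_deg=0.0, dc_offset=0j):
    """Apply a simple IQ modulator impairment model to ideal symbols."""
    g = 10 ** (gain_imbalance_db / 20)    # Q-path gain relative to the I path
    phi = math.radians(phase_skew_deg)    # departure from ideal 90 degrees
    out = []
    for s in symbols:
        i = s.real
        q = g * s.imag
        # The Q axis is tilted by phi, so part of Q leaks into I.
        out.append(complex(i - q * math.sin(phi), q * math.cos(phi)) + dc_offset)
    return out


def evm_percent(measured, reference):
    """RMS error vector magnitude, normalized to RMS reference power."""
    err = sum(abs(m - r) ** 2 for m, r in zip(measured, reference))
    ref = sum(abs(r) ** 2 for r in reference)
    return 100 * math.sqrt(err / ref)


# Ideal unit-power QPSK constellation used as the reference.
QPSK = [complex(i, q) / math.sqrt(2) for i in (1, -1) for q in (1, -1)]

evm_ideal = evm_percent(QPSK, QPSK)
evm_dc_only = evm_percent(impair(QPSK, dc_offset=0.01), QPSK)
evm_all = evm_percent(
    impair(QPSK, gain_imbalance_db=0.5, phase_skew_deg=2.0, dc_offset=0.01), QPSK
)
```

In this model a DC offset of 1 percent of the symbol amplitude alone produces an EVM of 1 percent, and adding gain and phase imbalance raises it further, which is why each term needs to be calibrated out.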

Market Trends

Among the important trends in the market are the new communication standards in development or in the early stages of deployment, such as WLAN 802.11ac and LTE-A, which require increased bandwidth. Equally, the trend over the last few years has been for products such as phones, tablets and routers to incorporate multiple radio technologies. Test instruments need flexibility and scalability to meet these requirements and the ability to upgrade and keep pace with standards will keep software defined test instrumentation at the leading edge of the market’s demands. Customers also continue to demand shorter times to market for new technologies, which increasingly favors a software defined approach, since it can be too time-consuming and expensive to design specific hardware solutions.