This application note will be posted in five parts, from September 8 to September 22. You can check this spot every Tuesday and Thursday for updates, or download the entire app note here.

On September 1, Keysight Technologies released the industry’s first handheld analyzers with real-time spectrum analysis (RTSA) up to 50 GHz. For today's more distributed and complex communication networks, Keysight FieldFox handheld analyzers are the world's most integrated and precise analyzers — the first to deliver benchtop accuracy up to 50 GHz in a portable, rugged package. Now, FieldFox is going further, with RTSA.

FieldFox RTSA software, Option 350, is designed for engineers and technicians performing interference hunting and signal monitoring, specifically in surveillance and secure communications, radar, electronic warfare and commercial wireless markets.

With the widespread increase of wireless technologies, interference over wireless networks is on the rise and is degrading network quality. Traditional spectrum analyzers can capture slowly changing signals, but new wireless technologies are “bursty” in nature, with signals that quickly come and go. This makes it difficult to capture interfering signals and pinpoint their location.

RTSA can capture bursty signals in real time, which significantly improves the ability to detect them. By capturing a range of signals, FieldFox’s new RTSA option provides a composite view that makes it easier to identify the source of interference.

FieldFox RTSA allows users to measure

- from 5 kHz to 50 GHz,

- in real time to capture every signal,

- small signals once masked by stronger signals,

- without gaps, using gapless capture techniques.

FieldFox RTSA reveals and separates captured signals with more insight and provides

- pulse detection as narrow as 22 ns and 63 dB of spurious-free dynamic range,

- one integrated, lightweight unit, so users don’t need to carry multiple instruments,

- easy upgrades via software license keys,

- choice of 16 models (combination and spectrum analyzer models) from DC to 50 GHz:

  - combo models include spectrum analyzer, VNA, CAT, RTSA and more,

  - all models offer benchtop precision and are MIL-Class 2 rugged.

This application note discusses practical strategies for overcoming RF and microwave interference challenges in the field using RTSA. It covers the different types of interference encountered in both commercial and aerospace/defense (A/D) wireless communication networks. The paper also discusses the drawbacks of traditional interference analysis and includes an in-depth introduction to RTSA, explaining why this type of analysis is required to troubleshoot interference in today’s networks with bursty and elusive signals.

With the increase of wireless technologies in communication networks, one inherent challenge is interference. Regardless of the type of network, performance is always limited by the noise level in the system. This noise can be generated internally and externally.

The level of interference management determines the quality of service. For example, uplink noise level management of an LTE network can dramatically improve its performance. Proper channel assignment and reuse in an enterprise wireless local area network (LAN) assures the planned connection speed, and optimized antenna location/pattern in a satellite earth station contributes to the reliability of communication under all weather conditions.

To detect demanding signals and troubleshoot network issues, RTSA capability is necessary for field test. In this paper, we look at interference in various networks and explore RTSA technology, its key performance indicators, and strategies for troubleshooting radar, EW and communication network interference.

Part 1: Review of RF and microwave interference

In commercial digital wireless networks, the key challenge is to provide as much capacity as possible within the available spectrum. This design goal drives much tighter frequency reuse and wider channel deployment. Because cell sites are so close to each other and base stations transmit at the same time, the result is a much higher noise level on the downlink (the direction from the base station, or BTS, to the mobile). The higher downlink noise level at the mobile antenna triggers the mobile to increase its output power to compensate. In turn, this raises the uplink (the direction from the mobile to the BTS) noise level at the base station antenna. The higher noise level at the BTS antenna reduces cell site capacity. This is considered network-internal interference.

In addition to internal interference, external interference is becoming more and more prevalent due to tight frequency guard bands between network operators, poor network planning and optimization, and illegal use of spectrum.

LTE network interference issues

The LTE network is a noise-limited network. It has a frequency reuse of 1, which means every cell site uses the exact same frequency channel. In order for an LTE network to work properly, it must have a sophisticated and efficient interference management scheme.

On the downlink, LTE base stations rely on channel quality indicator (CQI) reports from the mobile to estimate the interference in the coverage area. CQI is a measure of the signal-to-interference ratio on the downlink channel or on certain resource blocks, and it is a key input for the base station to schedule bandwidth and determine the throughput delivered to the mobile. The interference is an aggregation of noise generated inside the cell site and interference coming from external transmitters. If there is external interference on the downlink, it will drive CQI lower and cause more retransmission of data, which in turn decreases network speed. Downlink interference is one of the most challenging situations to deal with, because there is no direct feedback from the base station to indicate that interference is present.
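To make the aggregation concrete, here is a minimal Python sketch of how an external interferer lowers the signal-to-interference ratio that a CQI report reflects. The function names and all power levels are hypothetical values chosen for illustration; the actual mapping from this ratio to a CQI index is not covered here.

```python
import math

def dbm_to_mw(dbm: float) -> float:
    """Convert a power level from dBm to milliwatts."""
    return 10 ** (dbm / 10)

def mw_to_dbm(mw: float) -> float:
    """Convert a power level from milliwatts to dBm."""
    return 10 * math.log10(mw)

def sinr_db(signal_dbm: float, noise_dbm: float, interferers_dbm: list) -> float:
    """Signal-to-interference-plus-noise ratio in dB.

    Noise and interferer powers are summed in linear units (mW),
    then the total is compared with the wanted signal.
    """
    total_mw = dbm_to_mw(noise_dbm) + sum(dbm_to_mw(p) for p in interferers_dbm)
    return signal_dbm - mw_to_dbm(total_mw)

# Hypothetical levels: -80 dBm wanted signal, -100 dBm internal noise.
clean = sinr_db(-80.0, -100.0, [])
# Same link with one hypothetical external interferer at -90 dBm.
degraded = sinr_db(-80.0, -100.0, [-90.0])

print(f"SINR without external interference: {clean:.1f} dB")
print(f"SINR with external interference:    {degraded:.1f} dB")
```

With these assumed numbers, a single interferer 10 dB above the internal noise costs roughly 10 dB of signal-to-interference ratio, which is the kind of drop that pushes CQI lower.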

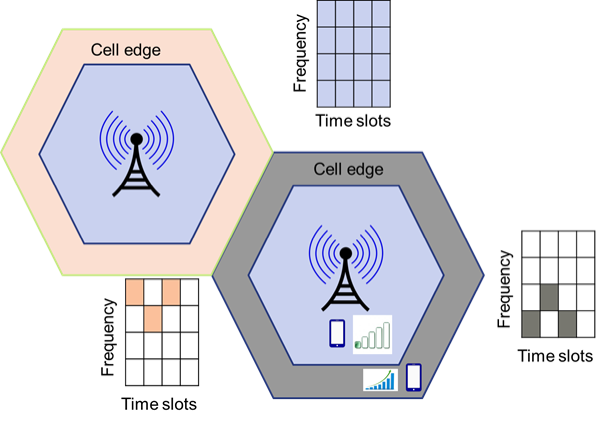

Precise power control plays a very important role in LTE interference management because the serving cell and neighbor cells share the same frequency channel. The network needs to minimize interference at the edge of the cell and, at the same time, provide sufficient power to the edge users to get good service quality. An LTE base station provides full spectrum at lower power in the center of the cell and allocates fewer resource blocks (subcarriers) at the edge of the cell but delivers more power (see Figure 1). This approach improves overall cell throughput and minimizes the interference.

LTE control channels are located at the center of the channel, with a bandwidth of 1.08 MHz regardless of the system’s channel bandwidth. Key downlink control channels are the primary sync, secondary sync and broadcast channels. The primary sync and secondary sync channels are used to get the mobile to synchronize with the cell and to start to decode system information. If there is narrowband interference close to the center of the LTE channel, it can have a major impact on the mobile’s synchronization process. Sometimes it can even block the whole cell. For example, some analog 700 MHz FM wireless microphones can easily block an LTE cell; these wireless microphones are banned by the FCC.
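As a rough illustration of why the position of a narrowband interferer matters, the following Python sketch checks whether an interferer overlaps the central 1.08 MHz of an LTE channel. The function name and the example frequencies are hypothetical, and the check is a plain interval-overlap test rather than a model of the synchronization process.

```python
CONTROL_BW_MHZ = 1.08  # width of the central region that carries the control channels

def overlaps_control_region(channel_center_mhz: float,
                            interferer_center_mhz: float,
                            interferer_bw_mhz: float) -> bool:
    """Return True if the interferer's occupied band overlaps the central 1.08 MHz."""
    ctrl_lo = channel_center_mhz - CONTROL_BW_MHZ / 2
    ctrl_hi = channel_center_mhz + CONTROL_BW_MHZ / 2
    int_lo = interferer_center_mhz - interferer_bw_mhz / 2
    int_hi = interferer_center_mhz + interferer_bw_mhz / 2
    # Two intervals overlap when each one starts before the other ends.
    return int_lo < ctrl_hi and int_hi > ctrl_lo

# Hypothetical LTE channel centered at 751.0 MHz.
near_center = overlaps_control_region(751.0, 751.3, 0.2)  # 200 kHz interferer near center
off_center = overlaps_control_region(751.0, 754.0, 0.2)   # same interferer 3 MHz away

print(near_center, off_center)
```

An interferer flagged by this check sits on top of the sync and broadcast channels, so it deserves attention even when its total power looks small against the full channel bandwidth.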

Microwave backhaul interference issues

About 50 percent of the world’s base stations are connected to backhaul with a microwave radio. With recent developments in Gigabit Ethernet over microwave, microwave radio is very attractive as a backhaul option for 4G/LTE deployment.

As with any radio technology, interference is always part of the network. For microwave radio networks, the primary sources of interference are discussed below.

Reflection and refraction

In a mobile network, microwave radios are widely used for point-to-point connections. When a radio is deployed in an urban area and its transmission path is blocked, the signal can be reflected and cancel a portion of the energy arriving at the remote receiver, or it can be bent so that it changes direction (i.e., refraction). Either case can cause a system outage.

Interference on unlicensed bands

In recent years, point-to-point Ethernet microwave links have been widely used for mobile backhaul because they are convenient and lower cost. Point-to-point microwave links operate on either licensed bands or unlicensed bands such as 5.3, 5.4 and 5.8 GHz. In unlicensed bands, more interference-related system outages will occur. These bands are very close to the frequencies used by 802.11n and 802.11ac WLAN, leading to interference between the two systems. For example, when a WLAN operates near a 5.8 GHz microwave radio, it can raise the power level at the microwave radio receiver, misleading the microwave radio into reducing its transmit power on the link; in turn, the radio will not transmit sufficient power to maintain the signal level actually needed, so an outage occurs.
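The outage mechanism in this example can be sketched with a toy model in Python. It assumes, purely for illustration, that the link's power control holds the total power measured at the receiver (wanted signal plus interference) at a fixed target level; the function name, the target, the interference level and the minimum required signal are all hypothetical values, not figures from any particular radio.

```python
import math

def wanted_signal_dbm(target_rx_dbm: float, interference_dbm: float) -> float:
    """Wanted-signal level left at the receiver under the toy model.

    Assumption: transmit power is adjusted until the TOTAL measured
    receive power equals target_rx_dbm, so any in-band interference
    displaces part of the wanted signal.
    """
    target_mw = 10 ** (target_rx_dbm / 10)
    interference_mw = 10 ** (interference_dbm / 10)
    wanted_mw = target_mw - interference_mw
    if wanted_mw <= 0:
        return float("-inf")  # interference alone already meets the target
    return 10 * math.log10(wanted_mw)

TARGET_RX_DBM = -60.0     # hypothetical receive level the control loop aims for
MIN_REQUIRED_DBM = -65.0  # hypothetical level the link needs to stay up

quiet = wanted_signal_dbm(TARGET_RX_DBM, -120.0)    # negligible interference
with_wlan = wanted_signal_dbm(TARGET_RX_DBM, -61.0)  # nearby WLAN at -61 dBm

print(f"Wanted signal, quiet band: {quiet:.1f} dBm")
print(f"Wanted signal, WLAN nearby: {with_wlan:.1f} dBm")
print("Outage" if with_wlan < MIN_REQUIRED_DBM else "Link holds")
```

In this simplified picture the receiver still sees the level it expects, but most of that power is WLAN energy, so the wanted signal falls below what the link needs.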

End of Part 1. Read Part 2.