Special Report

Test Enables High Volume Manufacturing

Ernest Rejman

Contributing Editor

Fig. 1 A concept of a device capable of uploading and downloading data and video, and offering voice communications. Source: Nokia.

A nightmare looms on the horizon for test manufacturers as well as their end-users, the device and product manufacturers. In 2002, 300-mm-wafer production lines will begin working in earnest. Anyone who thinks the semiconductor industry had a difficult time moving to larger wafers and coping with ever smaller critical dimensions ain't seen nothing yet, compared to what the high volume test industry faces.

As the wireless network infrastructure progresses and bandwidth ceases to be a problem, the mobile communications market will explode with any number of PDAs and other devices, capable of uploading and downloading data and video, and also offering voice communication. All of these will require advanced test methods. Figure 1 illustrates a concept of such a device.

PCs and Test

PCs have transformed the test landscape. They are increasingly integrated into instrumentation, and today's test suites, with their familiar screens, keyboards and mice, look more like PCs than test systems. This is partly because new products with abbreviated life cycles, such as cellphones, force test suite development to keep pace with each product's requirements. That demands flexibility and programmability, particularly with the trend toward outsourcing test, because contract manufacturers demand flexibility.

Andrew Bush, manager of product development for the Test Instruments Group at Keithley Instruments, admits that PCs drive everything and have raised test expectations. "Our modular integrated system looks like a PC," he stated. Rick Robinson, general manager of the Measurement Component Technologies Center for Agilent Technologies, agrees and expects this trend to grow. "Test and measurement must more aggressively adopt computer and network standards," he said.

Last April, Tektronix and Wind River Systems announced a partnership to integrate Tektronix's TLA600 and TLA700 series logic analyzers with the embedded software maker's visionICE and visionProbe on-chip debug software. A main mover for this, according to Peter Dawson, vice president and general manager of Wind River's hardware and software integration business unit, is that, "The real-time nature of embedded systems requires the visibility offered by logic analyzers."

He added that the hurdle was presenting analyzer data in a format familiar to the software engineer. Industry observers indicate that these kinds of relationships must continue to grow if technology and test cost requirements are to be met. As ICs and the systems they go into become more complex, the software that runs them and adds functionality to finished consumer products, such as cellphones and PCs, also grows in complexity, so hardware and software must be debugged together.

BIST, Software, SOCs and Cost

The gap between chip speed and tester speed has always been an issue - next-generation chips tend to be tested with current-generation testers. Automated test equipment (ATE) systems today can test at a maximum of about 1 GHz, while Intel's Pentium 4 races at up to 1.5 GHz, with faster devices in the works.

Although accuracy is an issue at these speeds, a considerable hurdle in testing today's chips is not so much processing speed, but the system buses used to access them. These are notorious test bottlenecks, and have led the test industry and chip manufacturers to consider alternatives such as parallel testing and asynchronous operations.

Built-in self test (BIST), a design for test (DFT) technique, is a practical solution for some speed and cost issues. BIST's main advantage is that the latest technology is automatically incorporated to test the chip, because the self-test is built into the device. Joe Jones, CEO of BridgePoint Technical Manufacturing, a contract test house, is skeptical. While DFT is important, he believes BIST has a limited future because it adds cost to the chip and takes up real estate. Although he admits that BIST provides chip performance data quickly and efficiently, he prefers a software solution. "Chips should be designed to enable them to receive software while operating at speed and output results," he said. "This could be done over a system bus, avoiding asynchronous operations." Texas Instruments has experimented with low cost testers to deliver software instructions into a device and determine response.

Manufacturers face a major problem with system-on-chip (SOC) testing. With each succeeding generation these ICs increase in complexity, and test equipment makers must cope with this situation while keeping costs from going through the roof.

The problem is two-fold - there is the difficulty of accessing buried components, and the testers' reuse capabilities. Testers are limited by the bandwidth at the chip's pins, and reuse can be a problem because testers must treat each SOC as a new chip with a different set of pins. One chip could be low speed digital with complex analog, the next high speed digital and analog, and a third high speed digital with embedded memory and low speed analog.

It is possible to run high end test equipment at a 1 Gbit/sec digital data rate, and there are options for scan-testing embedded memories along with a suite of analog instruments. But if the system is designed to handle complex devices, it is wasteful to use it to test cheap, low end devices, and perhaps only eight percent of devices tested are high end - not nearly enough to keep the expensive tester busy.

Another manufacturing-related possibility is native-mode testing for high speed interfaces. Structural test could reduce the cost of testing a SOC's digital portions by putting more of the digital test functions on the chip itself. The chip is loaded with data at a slow speed, runs the test at a higher speed using the tester's clock and data is retrieved at a slower speed. While this avoids the need for an expensive digital tester, there are no standards yet to develop specifications for a structural tester.

Structural testing includes BIST, automatic test pattern generation and DFT methods. Scan test, a DFT method, gives probes access to the chip's interior via a set of scannable registers. But it is a serial method and not particularly fast. There are currently no clear analog test solutions for SOC.
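The serial nature of scan test can be illustrated with a small simulation. This is a minimal sketch, not a model of any particular tester or chip: the function name `scan_test`, the four-bit chain and the inverting `logic` function are all hypothetical. It shifts a pattern into a chain of scannable registers one bit per clock, applies one capture clock, and shifts the response back out, counting clock cycles along the way.

```python
from typing import Callable, List, Tuple


def scan_test(
    pattern: List[int],
    logic: Callable[[List[int]], List[int]],
) -> Tuple[List[int], int]:
    """Simulate one scan-test pattern on a single scan chain.

    `logic` is a hypothetical stand-in for the combinational logic whose
    outputs are captured back into the scan registers.
    Returns the captured response and the number of clock cycles used.
    """
    chain_length = len(pattern)
    registers = [0] * chain_length
    cycles = 0

    # Shift in serially: one bit enters the chain per clock cycle.
    for bit in reversed(pattern):
        registers = [bit] + registers[:-1]
        cycles += 1

    # One capture clock loads the logic outputs into the registers.
    registers = logic(registers)
    cycles += 1

    # Shift out serially: one bit leaves the end of the chain per clock.
    response = []
    for _ in range(chain_length):
        response.append(registers[-1])
        registers = [0] + registers[:-1]
        cycles += 1
    response.reverse()  # response[i] now corresponds to register i

    return response, cycles


def invert(regs: List[int]) -> List[int]:
    """Toy logic block (an assumption for illustration): invert every bit."""
    return [b ^ 1 for b in regs]


if __name__ == "__main__":
    response, cycles = scan_test([1, 0, 1, 1], invert)
    print(response, cycles)
```

In this sketch each pattern costs roughly twice the chain length in clock cycles, so the cost per pattern grows with chain length, which is why a serial method is not particularly fast.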

BIST is attractive because it solves the problem of using testers one or two generations behind the device they are testing by providing the means on the silicon itself. However, a hurdle arises from the fact that sophisticated analog chips are being combined with digital, and test vendors tend to be experienced with digital or analog test, but not both. Currently, there is no digital or SOC tester capable of testing the RF/power front-end on a cellphone. Soon, two-chip phone devices will change to a single chip incorporating digital and the RF/power front-end, and there are no solutions to test this in a cost-effective way.

Application-specific and Outsourcing

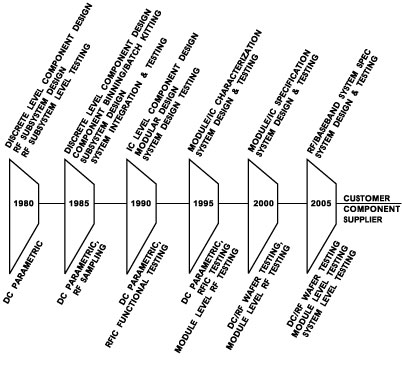

According to Walt Strickler, manager of the telecom business unit at Keithley Instruments, end-users want increasingly sophisticated test for the same or less cost. "There is increased pressure from some of our customers to provide more targeted, less general-purpose test equipment," he said, "and handset manufacturers are a good example." Strickler added that the opposite seems true of contract manufacturers. "They, too, want low cost, but demand general-purpose testing capabilities that will enable them to run, for example, a GSM, TDMA or CDMA handset on the same line." Regardless of the apparent conflict, the trend seems toward more application-specific testing instruments, increasingly tailored to individual needs, for less cost. This could impact the economies of scale. High volume manufacturing test trends combine a product component and a test manufacturing component. With device technology and product life cycles speeding ahead, high volume manufacturers often find themselves testing a next-generation product with last generation's platform, as shown in Figure 2.

Fig. 2 High volume manufacturing test trends. Source: Agilent.

Like many, Strickler believes that BIST has a place, but that cost pressures will not allow it on a large scale. "If cheaper ways are found to do test, it won't become widespread. IC manufacturers are increasingly trying to do more test on the wafer," he said. "They may do whatever BIST they can, but they're now doing considerable RF probing and even some functional test on the wafer. I see test at that level as the trend - kill problems before they reach subassemblies and the phones."

"The fact is that, not only in high volume manufacturing but any kind of manufacturing, from cars to cellphones, people would rather not test at all," said Dave Myers, vice president, Component Manufacturing Solutions at Agilent. "Every so often someone forecasts that testing is dead and that, for one reason or another, it won't be needed or done. Then designers produce something that's more complex and capable, and the cycle restarts. Test is dead, so long as we stop progress." At some point in time, and this is true for any device or part, less test takes place. Some devices like medium-performance switches, low and medium-performance capacitors and filters are no longer tested. Manufacturing and test have intertwined roles. As a product reaches maturity, the level of testing needed declines, but never vanishes. If test is unnecessary at the product level, it is often crucial at the device level. Figure 3 demonstrates the current role of test in manufacturing.

Fig. 3 Manufacturing test role. Source: Agilent.

This extends to products like PCs. While components and boards are thoroughly tested, the PCs themselves are plugged in, turned on, and if they light up, they ship. This is about as close as it gets to a functional test, and reflects a trend whereby test value migrates to the components. "Components are becoming increasingly complex and have greater capabilities," said Myers. "With the PC there isn't much value added at the end of the chain - it's a plastic box, a circuit board or two, all put together at the lowest tech level. But if you backtrack along the value chain to where the microprocessor and the memory are designed and produced, these are not only heavily tested - they're hypertested." Much the same holds true for the wireless industry, where cellphones are pretty much slapped together and shipped. The value added to the product lies in the few ICs and RF components, which are being tested more than before.

While cost of test is always a concern, with high volume manufacturing it becomes an obsession. Cost of test can determine whether a device is economically producible. "As engineers invent devices, we must come up with ways of testing them that aren't prohibitively expensive or time-consuming," said Myers.

Quality is an issue in high volume manufacturing test. "A few years back, 70 or 80 percent yield on components was considered darn good," recalled Myers. "Now, expectations, particularly for components, rise every year because yield is directly related to production cost, binding together test and yield."

There are still components that go into cellphones and basestations, where yield is surprisingly low - under 50 percent. In fact, it requires an investment in test to determine whether the yield is even at that level. This results from device complexity and fabrication process intricacies. The advantage of semiconductor processing, which is why everyone wants to turn every component and device into an IC, is predictability. Once the process is tuned, millions of die can be cranked out with small variations across them. This is not the case with many devices, particularly in the RF world, where technology is not advanced to the point where everything can go on a chip. Instead, thin-film or hybrid technologies are used, resulting in much more process variation.
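The link between yield and production cost can be made concrete with a simple calculation. The sketch below assumes, purely for illustration, that every unit built is also tested and that only good units are sold, so the whole cost of building and testing is spread over the good units. The function name and the cost figures are hypothetical, not taken from any manufacturer.

```python
def cost_per_good_unit(build_cost: float, test_cost: float, yield_fraction: float) -> float:
    """Cost carried by each good unit when bad units are scrapped.

    Assumes (for illustration) every unit is built and tested once,
    and that only the fraction `yield_fraction` passes and ships.
    """
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in the range (0, 1]")
    return (build_cost + test_cost) / yield_fraction


if __name__ == "__main__":
    # Hypothetical figures: 1.00 to build and 0.20 to test each unit.
    for y in (0.8, 0.5):
        print(f"yield {y:.0%}: {cost_per_good_unit(1.00, 0.20, y):.2f} per good unit")
```

Under these assumptions, a drop in yield from 80 to 50 percent raises the cost carried by each good unit by 60 percent, which is why test and yield are bound together.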

In the semiconductor processing world, metrology - test - is not only becoming an integral part of the production process itself, but a controlling factor as well. Something similar is occurring in high volume testing in the case of tantalum capacitors, for example, and how they are produced and tested. Ways have been designed to inspect early in the process, rather than at the end. With most complex RF devices, however, it is not possible to do inspection along the way - they must be built, then tested.

This is why RF components created in fairly high volumes for handsets (such as resonator filters), which still require considerable manual labor to produce, will eventually be replaced with SAW and film bulk acoustic wave resonator filters, moving the simpler devices into an IC-like process and eliminating the tuning/test step. These devices would go into modules, with each module then tested and tuned, moving the tuning step further down the value chain.

"Rather than tuning each individual discrete component, you put several together and tune the end product," said Myers. "This reduces total tuning time. However, tuning and test and measurement requirements for complex modules are daunting. Imagine a module that has optical going in and modulated RF coming out. Doing stimulus response measurements on that device would be difficult. Today, you would do it with a number of instruments, join them with a PC and write some software. You could test the device, but it would probably be insufficiently cost-effective for volume production."

RF Test Considerations

"Currently, the demand for test is increasing," said Cheng-Juen Chow, manufacturing manager for Wireless Semiconductor Division (Penang, Malaysia), Agilent's largest manufacturing facility. "Before, we had devices that we just tested parametrically. Now, more RF testing and a final functional test are needed on most of our components." Chow believes that eventually much of the high volume testing will be incorporated at the back- and front-ends, to distribute the amount of testing and guarantee device performance as more integration takes place.

Like everyone in his position, Chow's aim is to minimize test. "It can be done with components," he pointed out. "There's little choice for modules, because you must be able to ensure the ultimate performance. In that case, test UPH (units per hour) is probably more important." Chow expects that over the next two years their test requirements will be to move testing as much as possible to the wafer level, to get good RF die moving into production.

David Vondran, the Microwave Measurement Division marketing manager at Anritsu, is trying to get his arms around not only passive but active devices as well. "This isn't easy because of the high volume aspect of these devices and the choices people make for RF IC testing," he said. Some solutions are expensive and require considerable skill to support, which is why many companies have outsourced their testing.

"Many of the 2.5G, 3G circuits manufacturers are playing with are complicated," said Vondran. "Short-term, they will want to do almost 100 percent testing on these. They will look at things like noise figure, IMD, gain and compression, possibly the circuit interface, and there's still wafer and package testing."

From a cost and technical resource perspective, the emphasis is on board and product level testing, according to Olav Anderson, expert in RF measurement technology, Ericsson Radio Access, Gävle, Sweden. "The most demanding tests are parametric measurements that must be carried out on the radio interfaces at the board and product level to ensure conformity to standards and customer requirements," he said. "It may sound strange that so much effort is taken so late in the product manufacturing cycle, but it is necessary, as many alignments are done by electronic calibration tables entered into the product at this stage." Many tests would be too expensive and time consuming to do at the component level. Even if done, they would not ensure the necessary confidence in parametric conformance and functionality. Although slightly more than 50 percent of the circuitry in a radio basestation (RBS) transceiver is digital, in most products it accounts for less than 25 percent of the total test cost.

For RBS products, the most challenging problem is getting enough dynamic range and bandwidth to enable measurements directly on the products' air interface. "We often must design expensive and bulky 'work-arounds,' such as filter boxes, just to gain a few more dBs to use the instrument to meet our needs," said Anderson.

Fig. 4 Future RF test requirements. Source: Agilent.

Ericsson is concerned that in a few years traditional test methods will not be cost-effective and could become useless for test of emerging radio basestation products. "It's important that new instruments and methods be developed to meet the test needs of multiple carrier transmitters, receivers and multiple carrier power amplifiers," said Anderson. He added that in the case of a multicarrier, it would not be desirable to use eight or more traditional single-carrier signal generators to produce the signal scenario needed for the test. "A single generator is desirable, so instrument architecture must keep pace. Also, new linearization schemes like pre-distortion used on transmitters and MCPAs (multi-carrier power amplifiers) will require more extensive calibration in production." RF test requirements are increasingly demanding. Test manufacturers must respond to the challenge of reduced package size and contact pitch, better accuracy and dynamic range at higher frequencies, thermal management for test fixtures and higher integration levels for increased functionality, as shown in Figure 4.

Outsourcing in Action

Al Bondanza, manager of the San Antonio Engineering Group, Microwave Communications Division at Harris Semiconductor, one of the company's main manufacturing facilities, views outsourcing as a necessity. "We don't do any PCB manufacturing - we farm it out," he said. "We look to our PCB suppliers to provide us the different levels of test, depending on what the board is. For some they'll do in-circuit test, for others, functional test, and there are some in-between areas such as a boundary scan or a JTAG (joint test action group)."

When Harris brings in the PCBs, it does a functional test on most of them. "Then we do a system-level test, where we take the boards and make a radio, rack-and-stack them, and pass traffic through, with all the parts as it will ship to the customer, because each of our radios is customized to the customer's requirements as far as capabilities, frequencies, etc.," said Bondanza. Once the radio is configured, testing is carried out at that level. It consists mostly of calibration and a burn-in cycle that stresses the radios, because the power amps are pushed to the limit to ensure reliability.

Harris is using JTAG and boundary scan. "My goal is to get to a plug-and-play situation, where I can take any board, plug it into a rack and be able to ship it knowing that it will work," said Bondanza. "If I can plug everything into a rack, then plug the rack and see all green lights, I know we're good to go," he said. "We aren't far from that - we already have one system where every board has a controller."

Bondanza does not believe that future test requirements will be anything out of this world. "Over the last 10 years, the basic requirements haven't changed. A power amp is still a power amp. Customers want more power, that's a given, but this doesn't change the test parameters. You still want no distortion, for example. As we get into higher capabilities - two years ago we were doing T1, now we're doing OC3 and OC12 - and faster speeds, basic requirements won't change much."

A Mix of Capability and Cost

Craig Pynn, manager of corporate marketing at Teradyne, considers high volume a fascinating combination of technology and economics. "On the technology front it would be nice if we, the test industry, could get a hold of the technology three years before everybody else - then we'd be ready with test systems for the new technologies when manufacturers are ready to build products," he said. "It's always a yin-and-yang pull of how the tester deals with the technology."

Pynn recognizes that speed and resolution are the best ways to characterize technology. "Unfortunately, as speed goes up, cost usually follows. So the issue for a tester manufacturer is to deliver the right technical capability mix, while being told by the customer that he'd like to eliminate test altogether and that, short of that, he wants to do it as cheaply as possible." There are a host of variables involved, but it always boils down to minimizing cost of test, while adequately covering the technology of either the device or the board that's being built.

After almost three decades in the business, Pynn is astonished that many manufacturers still do not consider test an added-value feature. "Test is value-added, particularly for those delivering very high end technologies, because then test becomes a competitive advantage," he said. "By high end I don't mean the product as much as the technology incorporated into it. For example, in the case of microprocessors, high end would be a Pentium 4, lower end would be an Athlon. Those moving to 0.10 µm processes will be more concerned with ensuring that the product has been thoroughly tested, because there's no other way to find out whether it's functioning right or not. Anyone working in something like copper, for example, will be willing to pay for big iron."

Test is an element of manufacturing cost, and in a high volume environment like that of a Nokia, manufacturing costs must be minimized. This is behind the emergence of structural test at the assembly, board or device level, essentially determining whether the device and/or board was built correctly.

"As test engineers, we have been trained to look at functionality, when in point of fact in a high volume environment the objective is to keep the process under control," said Pynn, adding that this view of test became a method of collecting data to ensure everything is under control. "If you're building boards for a cellphone, you're not worried about the design because it's stable. You're interested in how well the thing is being built, so you rely on structural test, which tells you whether the board and/or device is built correctly. Structural test is less expensive, but it isn't just a pass/fail situation. With PCBs, for example, it will diagnose structural faults. If you're building a board and the pick-and-place equipment is out of calibration resulting in some skewing in a particular device, you might use an automated optical inspection system to pick that up. You want to find out that you're putting on parts incorrectly before building a zillion of them."
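The idea of using test data to keep a process under control can be sketched with a basic control-limit check. The technique shown here is a conventional three-sigma limit, chosen for illustration; the article does not say which method any manufacturer uses. The placement-offset figures, the units (millimeters) and the function names are all hypothetical.

```python
from statistics import mean, stdev
from typing import List, Tuple


def control_limits(baseline: List[float]) -> Tuple[float, float]:
    """Lower and upper limits at mean +/- 3 sample standard deviations."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - 3 * spread, center + 3 * spread


def out_of_control(measurements: List[float], limits: Tuple[float, float]) -> List[int]:
    """Indices of measurements that fall outside the control limits."""
    lower, upper = limits
    return [i for i, x in enumerate(measurements) if not lower <= x <= upper]


if __name__ == "__main__":
    # Hypothetical placement offsets (mm) from a well-calibrated machine.
    baseline = [0.01, -0.02, 0.00, 0.02, -0.01, 0.01, -0.01, 0.00]
    limits = control_limits(baseline)

    # Hypothetical later readings, e.g. from optical inspection.
    later = [0.01, 0.00, 0.05, 0.06]
    print(out_of_control(later, limits))
```

A check of this kind flags a drifting placement machine after a handful of boards rather than after a full production run.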

As smaller, more complex circuits make an appearance over the next two years, test will face some hurdles. Test is primarily electrical, which means that a connection must be made with the DUT. As devices get smaller, the linewidths are smaller, the paths are smaller and the capability to make physical contact with the board becomes more problematic and expensive.

At that point, like the packaging, the board begins reacting as if it were an actual part of the circuit. Formerly clear-cut boundaries between what is a board, a module or a device are becoming blurred. Is a SOC a tiny board? One man's system is another man's component. The question becomes what is the repairable subassembly level. If the product does not pass the test, what is to be done with it? If it is a garage door opener being built in very high volumes, it may make economic sense to just throw it away. In that case, test would be done on a pass/fail mode.

A test engineer who declined to be identified complained that many companies do not clearly define their objectives and test strategy. "They focus on the equipment's details and specifications," he said. "In board test, people are enamored of the equipment, and suddenly you're in competitive battles between brands A, B and C. Then, when you ask a few questions, you may discover the guy hasn't defined his test strategies sufficiently, and is looking at the wrong piece of equipment. It's like not deciding what car you want to buy before going to the dealership. Knowing the test strategy, the objective and the economic equation are key."

By 2002, 0.13 µm processes will become mainstream, and test requirements will turn topsy-turvy. The larger 300 mm wafers carry more numerous and highly complex dies, as many as 10,000 per wafer in the 200-pin range, and each must be tested individually and again in subassemblies before going into final products. Test equipment suppliers are scrambling to come up with new options, because traditional methods do little to reduce delay and speed the throughput of this new parts tsunami. With present technology, test could take more than a day per 300 mm wafer. The result of this on work flows and design and manufacturing is best left to the imagination.

ATE enhancements will solve some problems, with parallel test methods being applied to final test. However, simple extensions of today's methods will be insufficient to cope with the requirements of this next generation of manufacturing capability. Not only are new testers with enhanced capabilities required, but work flows and handling methods must be redesigned.
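A rough calculation shows how a wafer can take more than a day to test, and how far parallel test alone might go. The 10,000 dies per wafer comes from the figure above; the 10 seconds of test time per die and the number of parallel sites are assumptions chosen only for illustration, and the sketch ignores prober indexing and handling time.

```python
import math


def wafer_test_hours(dies_per_wafer: int, seconds_per_die: float, parallel_sites: int = 1) -> float:
    """Estimated test time per wafer, in hours.

    Assumes each touchdown tests `parallel_sites` dies at once and takes
    `seconds_per_die` seconds; indexing and handling time are ignored.
    """
    if parallel_sites < 1:
        raise ValueError("parallel_sites must be at least 1")
    touchdowns = math.ceil(dies_per_wafer / parallel_sites)
    return touchdowns * seconds_per_die / 3600.0


if __name__ == "__main__":
    # 10,000 dies per wafer (from the article); 10 s per die is an assumption.
    for sites in (1, 4, 16):
        hours = wafer_test_hours(10_000, 10.0, sites)
        print(f"{sites:2d} site(s): {hours:.1f} hours per wafer")
```

Under these assumed numbers, single-site testing exceeds 24 hours per wafer, and even multi-site testing leaves hours of test per wafer, consistent with the point that simple extensions of today's methods will not be enough.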