Everybody acknowledges that wireless communications technology has developed rapidly. It empowers our modern life, enables modern societies to operate efficiently, and has had a major impact on modern politics, economy, education, health, entertainment, logistics, travel and industry. In the last 20 years, wireless technologies have changed the world we live in. It seems as if life without modern wireless communications, as it existed before 1990, is difficult to comprehend.

Major breakthroughs and advances in communications are heavily related to the basic interests of humans. Nature has empowered us to talk and listen, leading to the demand for telephony. We are interested in information and want to share, requiring data communications. We like to collect and hunt, driving the demand for monitoring and sensing. Also, we like to steer and control within our environment, demanding control communications. Today we enjoy the power of telephony and data communications. Machine type communication and control is yet to fully enter the market. This article reviews the current state of the field in order to motivate and sketch a vision of the future.

When reviewing today’s situation, we see a chronology of revolutionary applications and technologies that have shaped everyday life. First and foremost, the need for untethered telephony, and therefore wireless real-time communication, has dominated the success of cordless phones, followed by cellular communications. Soon thereafter, two-way paging implemented by SMS text messaging became the second revolutionary application. With the success of wireless local area network technology (i.e., the so-called “IEEE 802.11” standard), Internet browsing, and the widespread market adoption of laptop computers, untethered Internet data connectivity became interesting and ultimately a necessity for everyone. This phenomenon opened the market for wireless data connectivity. The logical next step was the shrinkage of the laptop and merging it with the cellular telephone, which evolved into today’s smartphone. We now enjoy access to the world’s information at our fingertips, in any situation and at any place.

The next wave is the connectivity of machines with other machines, referred to as “M2M.” During the past years we have seen a multitude of wireless M2M applications being deployed, for example information dissemination in public transport systems. However, the commercial success has been somewhat limited. Why? The previous revolutionary applications have been clearly driven by addressing a human need, whereas M2M, by nature, needs more analysis before we can understand the basic human need it serves. Identifying that need could cause a breakthrough.

Today it is accepted that M2M is developing, although it is not yet known how to create a large market pull. A coarse analysis of the driving forces behind data services over the last decades is therefore needed.

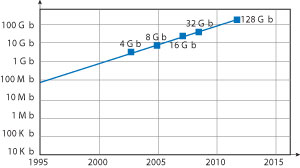

Figure 1 Moore's Law doubling single-chip memory capacity every 18 months leading to a 10× increase every five years.

The Wireless Roadmap

Over the years it has become evident that wireless data rates are continuously increasing over time. This is mainly driven by the need to transfer files of ever increasing size, as well as the rapid adoption and growth of streaming/podcast services. In this section, a quick review of data rate increases of wireless data applications (non-voice) over time is given, as this is seen to be the main driver of wireless technology development.

The International Technology Roadmap for Semiconductors (ITRS) shows for flash memories a history, as well as projection, of a factor of 10× in memory increase every five years, as seen in Figure 1.
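The two growth figures quoted for Figure 1 (doubling every 18 months and 10× every five years) can be checked against each other with a few lines of arithmetic. The following is only an illustrative sketch: the function name is hypothetical and ideal exponential growth is assumed.

```python
def growth_factor(doubling_months: float, span_months: float) -> float:
    """Growth over span_months when capacity doubles every doubling_months.

    Assumes ideal exponential growth (an idealization, not measured data).
    """
    return 2.0 ** (span_months / doubling_months)


# Doubling every 18 months, evaluated over five years (60 months).
five_year_growth = growth_factor(18, 60)
print(f"Growth over five years: {five_year_growth:.1f}x")
```

The result is close to 10, so the two statements describe the same trend.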

Flash memory is seen as the most important storage technology for the majority of “wireless gadgets,” which require broadband connectivity. Devices that use flash memory include cellular (smart) phones, game consoles, cameras, camcorders, subnotebooks and e-books, as well as modern laptops. Hence, the storage size increase of flash memory directly drives the size and amount of data stored. As stored data needs to be moved from one device to another, storage increase also drives the need for communications bandwidth. Another perspective is the use of cloud technologies for storage. As more businesses continue to take advantage of the cloud, wireless bandwidth and network capacity needs could potentially grow by several orders of magnitude over current usage.

Wireless gadgets, as previously defined, also need connectivity at different levels. USB is the transport medium of choice, being an easy way of moving data quickly between two devices that are closely positioned to each other (approximately 1 meter apart). From USB 1.0 at 12 Mb/s, data rates have now increased to 4.8 Gb/s with the introduction of the first USB 3.0 interfaces in 2009. Wireless USB is often considered as an alternative, in particular for connecting devices that have their own power supply and do not need the 2 W powering capability via the USB cable. For example, when downloading pictures from a digital camera to a laptop, both devices run on their own batteries.

Figure 2 The Wireless Roadmap.

So far, however, wireless USB has not been economically successful. The main reason is that IEEE 802.11 wireless LAN (WLAN) standards have also been providing increasing data rates, as can be seen in Figure 2. So far the difference in data rates between wireless USB and 802.11 is not large enough to make a noticeable difference in user experience. In addition, the cost difference for a chip set is negligible and the 802.11 protocol stack is well integrated into PC and cellular phone operating systems, whereas an 802.15 wireless USB protocol stack would require a whole new design effort with unknown engineering and debugging problems.

Therefore, even though WLAN systems are specified for a 10-meter range of operations and are intended for client-server applications in offices, hot spots or homes, they have also been able to eliminate the business case for wireless USB. With only a negligible speed difference between the two, wireless USB has virtually no market viability left.

Cellular, on the other hand, is a very different business. The most important feature of cellular is to provide coverage, meaning reliable connectivity no matter where a customer is located. Once connectivity is available and a connection is established, the speed of data communications becomes the next challenge. With coverage and reliability sufficiently addressed, consumers are demanding higher data rates for cellular, as the wireless roadmap shows. Currently the next generation cellular, LTE, is providing data rates in the order of 36 Mb/s. This fits very neatly in the projected 10× data rate increase every five years mentioned previously. Remarkably, the data rates provided by USB, WLAN and cellular all increase by 10× every five years, exactly the same rate as the increase in size of flash memories according to the ITRS roadmap.

Figure 3 The Wireless Roadmap matching Moore's Law.

The speed difference of 100× in data rates between cellular and WLAN (see Figure 3) has, so far, created a large enough differentiator for both technologies to be able to succeed in the market place (see Clay Christensen’s “The Innovator’s Dilemma,” Harvard Business Press).

Cellular service providers now offer consumers femto base stations or “femto cells” in the home to improve cellular connectivity and coverage, ultimately providing access to a faster and more reliable cellular network. As small (femto) cells enter the home, and due to the improved link budget in this case, this heterogeneous network topology combined with future cellular technologies may deliver data rates approaching WLAN. This could create a situation where cellular may encroach upon the WLAN value proposition. However, because cellular chip sets are typically more expensive than WLAN chip sets, in part because of the high IP licensing costs of cellular technology, it is anticipated that WLAN will remain a dominant player in the market.

One question remains: “Is there a need for data rates beyond 100 Mb/s in the future?” Today, more than 50 percent of the data volume measured in cellular networks is generated by users’ increasing use of streaming applications. As high-definition 3D streaming with user-enabled vision angle control requires data rates in the order of 100 Mb/s, and users want quick downloads of typically above 100× real-time of multiple streams, we will see more than 10 Gb/s wireless connectivity as a future requirement. Obviously this does not lead to a need for a continuously sustainable very high bandwidth for one user over long periods of time. Instead, for example, 100 Gb/s data rates will be shared via the wireless medium.
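The 10 Gb/s figure follows from multiplying the stream rate by the desired download speed-up. A quick check of that arithmetic is sketched below; the two-hour programme length is a hypothetical value chosen purely for illustration and does not come from the article.

```python
STREAM_RATE_BPS = 100e6          # 3D HD stream rate quoted in the text
DOWNLOAD_SPEEDUP = 100           # download at 100x real time, as in the text
PROGRAMME_LENGTH_S = 2 * 3600    # hypothetical two-hour programme (assumption)

# Link rate needed to fetch one stream 100x faster than it plays back.
required_rate_bps = STREAM_RATE_BPS * DOWNLOAD_SPEEDUP

# Time to download the whole programme at that speed-up.
download_time_s = PROGRAMME_LENGTH_S / DOWNLOAD_SPEEDUP

print(f"Required link rate: {required_rate_bps / 1e9:.0f} Gb/s")
print(f"Download time: {download_time_s:.0f} s")
```

Under these assumptions the link is busy for only about a minute per programme, which is why such a rate can be shared among users rather than sustained for one.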

Setting the Stage for LTE and Beyond

Cellular technologies will continue to be driven by demands for not only reliable coverage but also high data rate access. Cellular service providers are introducing 4G LTE with a new Orthogonal Frequency Division Multiple Access (OFDMA) transmission scheme acting as a stepping stone for providing higher data rates with future improvements, which will enter the standardization process later. Initially, LTE networks will offer the same speed order as HSPA+, its predecessor. This repeats the case we have seen at the time of 3G UMTS introduction, which was also rolled out with data rates comparable with previous second generation enhancements of 384 kb/s EDGE. The new OFDMA air interface of LTE will provide the foundation for developing enhanced standards, what is currently referred to as LTE-Advanced. LTE-Advanced technologies therefore can be seen as a further stepping stone for cellular evolution along the data rate increases in the projections of Moore’s Law and as required by the market.

Considering the technology and market forces in 10 years, we must be able to address speeds of 10 Gb/s or more. Current systems do not scale to this requirement. Using OFDMA for these data rates, the analog/digital conversion with 10-bit resolution alone would represent a power consumption challenge that cannot be resolved with current technology projections. Hence, the power consumption determines that a new physical layer approach needs to be found for 5G cellular communications.

Driving Technology by Human Needs: Sensing, Monitoring, Collecting

One of human nature’s greatest desires is to hunt and collect information in order to learn more about the status of our environment. This natural trait has found its way into a plurality of smartphone apps, which are available for tracing and tracking not only weather information, but the status of many different items and things. Obviously, this is not even a start when taking this to a more extreme view. For example, given that the status of every individual flower plant could be monitored and classified according to the kind of plant, we could ensure proper light and moisture for optimum growth and health. What is true in terms of desire for monitoring of flower plants is also true for many other applications. Examples are environmental status, traffic status, vehicle status, heating, ventilation, and air conditioning (HVAC) and its environmental status, and health monitoring. Clearly a large amount of monitoring can be achieved only if monitoring terminals can be designed to operate very simply, and for an extremely long duration.

An example of simple handling could be that such a sensing device would be activated by connecting the battery. The strip has a number or barcode which must be entered into a smartphone, initiating the download of an app and connecting the app with the device. By repeating this procedure for any additional device of the same kind, it would be added automatically to the same app. This is true for large deployment of M2M, at very low data rates, but with very large numbers of devices. This vision can come true if the M2M devices are connected via cellular networks and not only via WLAN or ZigBee. The reasons are the availability of cellular coverage, combined with the simplicity of handling as users do not have to set up and connect to a ZigBee or WLAN hotspot. This makes it simple enough for every person to be able to buy M2M sensors and participate in the collection of data.

The vision is that of “Things 2.0.” In Web 2.0, every person participates in social networks by disclosing personal information as well as their current status, documented in text, pictures, and relationships to others. In the world of Things 2.0, every M2M device participates by communicating its type and its status, and this information needs to be grouped in communities for aggregating and providing information. These communities could include hobby devices of the same class, for example, skis. If every ski communicated the current status of the run, you could instantaneously select the type of slope you are looking for considering elements such as ice for speed, moguls, powder, etc., and create “Skis 2.0.” The same would be true for other activities, Surfing 2.0 for instance. The incoming wave could be monitored and classified, so that you would not miss your chance to catch a great ride. Other M2M communities could be Cars 2.0, Homes 2.0 and Environment 2.0. Clearly, this vision could lead to a number of connected devices that easily exceeds 1 trillion.

Technology Challenges: Sensing, Monitoring, Collecting

When addressing the need for M2M sensing, it must be translated into a technical requirements specification, which serves as a design goal. As the M2M sensing requirements differ widely from one application scenario to another, a sensible specification must be developed that serves the needs of a dominating majority.

The best solution would be a device that could be activated, runs for 10 years on an AAA battery, and transmits, for example, 25 bytes of information in a duty cycle of every 100 seconds. This duty cycle could be an average, as it is moderated according to the instantaneous need. The skis from our previous example could be sitting in storage and would only be updated once a day, whereas on the slope a 1-second duty cycle might make sense. However, the question that needs to be answered is whether the power budget is reasonable. Taking the communication need of 25 B per 100 s, the resulting average data rate equals 2 b/s. Assuming a 2 MHz channel with a net capacity of 0.1 b/s/Hz (roughly GSM, and 5 percent of LTE), the cell offers 200 kb/s, which results in an average of 100,000 M2M devices per cell. If every device were billed $1 a year by the operator, this would result in $100k of addressable revenue per cell.

Data rate 25 B/100 s → 2 b/s average

2 MHz channel available at 0.1 b/s/Hz → 100,000 devices per cell

$1 revenue per device per year → $100,000 revenue per cell
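The capacity estimate can be reproduced step by step. The sketch below uses only the figures from the body text above (25 B per 100 s, a 2 MHz channel, a net capacity of 0.1 b/s/Hz and $1 per device per year); the variable names are illustrative.

```python
PAYLOAD_BYTES = 25               # bytes sent per report
REPORT_INTERVAL_S = 100          # one report every 100 s
CHANNEL_BANDWIDTH_HZ = 2e6       # 2 MHz channel
NET_CAPACITY_BPS_PER_HZ = 0.1    # net capacity assumed in the text
REVENUE_PER_DEVICE_USD = 1.0     # billed per device per year

# Average data rate of a single device.
avg_rate_bps = PAYLOAD_BYTES * 8 / REPORT_INTERVAL_S

# Net throughput the cell can carry in this channel.
cell_capacity_bps = CHANNEL_BANDWIDTH_HZ * NET_CAPACITY_BPS_PER_HZ

# Number of devices the cell can serve, and the resulting yearly revenue.
devices_per_cell = round(cell_capacity_bps / avg_rate_bps)
revenue_per_cell_usd = devices_per_cell * REVENUE_PER_DEVICE_USD

print(f"Average rate per device: {avg_rate_bps:.0f} b/s")
print(f"Devices per cell: {devices_per_cell:,}")
print(f"Revenue per cell: ${revenue_per_cell_usd:,.0f} per year")
```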

Assuming 16-QAM modulation with net 2 bit/symbol (after error correction coding) results in 4 Mb/s within the 2 MHz channel. Each 25 B (200 bit) packet would then require a 0.05 ms duration; adding preambles and ramp-up/down of the transceiver, roughly 0.1 ms duration per packet can be assumed. For this case, 25 B for uplink as well as for downlink packets results in 0.2 ms transceiver uptime per 100 s duty cycle, or an average on-time activity of 2×10⁻⁶.

16-QAM modulation → 0.05 ms packet duration → average on-time activity of 2×10⁻⁶

Considering an AAA battery with 1000 mAh @ 1 V and 10 years of M2M battery life operations, this leads to 10 µW average power available. Dividing this average by 2×10⁻⁶ results in a power budget of 5 W during transmit and receive, allowing for 1 W transmit power. Even when taking a standby power of 1 µW into consideration, the numbers only change marginally.

Average on-time activity of 2×10⁻⁶ → AAA battery allows for 10-year operation.
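The power budget can be checked in the same way. This is a rough sketch using the battery figures and the on-time activity quoted in the text; the variable names are illustrative, and the standby correction simply subtracts the standby draw from the average power, which is an approximation.

```python
BATTERY_CAPACITY_AH = 1.0     # 1000 mAh AAA cell, as in the text
BATTERY_VOLTAGE_V = 1.0       # nominal voltage used in the text
LIFETIME_YEARS = 10           # target battery life
DUTY_FACTOR = 2e-6            # average on-time activity from the text
STANDBY_POWER_W = 1e-6        # standby draw considered in the text

SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Stored energy in joules (1 Wh = 3600 J).
energy_j = BATTERY_CAPACITY_AH * BATTERY_VOLTAGE_V * 3600

# Average power the battery can deliver over the whole lifetime.
avg_power_w = energy_j / (LIFETIME_YEARS * SECONDS_PER_YEAR)

# Power available while the transceiver is actually on.
active_power_w = avg_power_w / DUTY_FACTOR

# Same budget after setting aside the standby draw (approximation).
active_power_with_standby_w = (avg_power_w - STANDBY_POWER_W) / DUTY_FACTOR

print(f"Average power: {avg_power_w * 1e6:.1f} uW")
print(f"Active budget: {active_power_w:.1f} W")
print(f"Active budget with standby: {active_power_with_standby_w:.1f} W")
```

Exact arithmetic gives about 11 µW of average power, which the text rounds to 10 µW; the active budget stays above 5 W with or without the standby draw.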

All the calculations above further improve when taking energy scavenging into consideration. Energy scavenging means harvesting the small amounts of power available in the environment, e.g., through solar cells, or from vibration through piezo elements. Therefore, the good news is that this kind of M2M Things 2.0 vision is technically possible. However, this is not true with current cellular systems, as their protocols require so much communications overhead for synchronization and channel allocation that 10 years of operations off an AAA battery is more than one order of magnitude out of reach. Hence, a new 5G standard is needed.

Driving Technology by Human Needs: Tactile Real-Time Constraints

The wireless roadmap clearly shows how technology continues to drive data rates. However, other forms of human interaction besides Internet browsing and multimedia distribution can be analyzed to understand basic needs, which will be serviced by drastically new innovations over the coming decades. For this, real-time experience can be analyzed in more detail. Obviously, real time is experienced whenever the communication response time is fast enough when compared with the time constants of the application system environment. There are four types of physiological real-time constants to consider: muscular, audio, visual, and tactile.

As humans we have the ability to react to sudden changes of situations with our muscles, for example by hitting the brakes in a car due to an unforeseen incident, or by touching a hot platter on a stove. If unprepared, the sensing to muscular reaction time is in the range of 0.5 to 1 second. This clearly sets boundaries for technology specification in comparable situations. An example is Web browsing. The page buildup after clicking on a link has to be in the same order of time. Hence, real-time browsing interaction is experienced if new Web pages can be built up within 0.5 seconds of clicking on a link. A shorter latency, that is, a faster reaction time of the Web, is not necessary for creating a real-time experience. This reaction time has been serviced by initial 802.11b and 3G cellular systems.

The next shorter real-time latency constant concerns the hearing system. Humans experience real-time interaction in conversations if the other party receives the audio signal within 70 to 100 ms. Because of the speed of sound, this means that real-time discussions cannot be carried out when standing more than 30 m (100 ft) apart. This fact has led the International Telecommunication Union (ITU) to adopt this value as the latency requirement for telephony. Since light travels roughly 1 million times faster than sound, many applications have been designed that adhere to this restriction. For engineering system specifications, the impact has been that speech delays on telephone lines have to be in that order of magnitude. Also, lip synchronization between the video stream and the sound track needs to be within the same time lag, otherwise the sound seems disconnected from the moving image. The 4th generation cellular standard LTE meets this requirement, as well as modern 3rd generation systems, making Internet video conferencing (e.g., Skype) viable over cellular.
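The link between the 30 m distance and the 70 to 100 ms window can be verified with a short calculation. The sketch below assumes a speed of sound of 343 m/s (air at roughly room temperature); that value and the function name are assumptions, not figures from the article.

```python
SPEED_OF_SOUND_M_S = 343.0          # assumed: air at about 20 degrees C
SPEED_OF_LIGHT_M_S = 299_792_458.0  # defined constant, vacuum


def acoustic_delay_ms(distance_m: float) -> float:
    """One-way delay of sound travelling distance_m through air, in ms."""
    return distance_m / SPEED_OF_SOUND_M_S * 1000.0


delay_30m_ms = acoustic_delay_ms(30.0)
light_to_sound_ratio = SPEED_OF_LIGHT_M_S / SPEED_OF_SOUND_M_S

print(f"Acoustic delay over 30 m: {delay_30m_ms:.0f} ms")
print(f"Light is about {light_to_sound_ratio:.1e} times faster than sound")
```

At 30 m the acoustic delay already sits inside the 70 to 100 ms window, and the speed ratio is a little under one million, consistent with the round figure used in the text.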

Our eyes, the visual sensing system of human beings, have a temporal resolution below 100 Hz, which is why modern TV sets have a picture refresh rate in the order of 100 Hz. This allows for a seamless video experience and translates into a 10 ms latency requirement.

Figure 4 Physiological real-time constants.

The toughest real-time latency is given by the tactile sensing of human bodies. It has been noted that we can distinguish latencies in the order of 1 ms accuracy. One example is the resolution of our tactile sensing when we move our fingers back and forth over a table with a scratch. Because of our high sensing resolution, we experience the scratch in the same position each time. Also, tactile-visual control is in the same order of magnitude, when moving an object on a touch screen for example. If we move our hand at a rate of 1 m/s, at 1 ms latency the image follows the finger with a displacement of approximately 1 mm. A latency of 100 ms would create a 100 mm (4") displacement. A similar experience can be had by moving a mouse and tracking the cursor on the display. Since we see a differential signal over a static background, a screen update resolution finer than 10 ms is necessary. More extreme situations where the 1 ms latency requirement can be experienced are when moving a 3D object with a joystick or in a virtual reality environment. A real-time cyber-physical experience can only be given if the electronic system adheres to this extreme latency time constraint. This is far shorter than current wireless cellular systems allow for, missing the target by nearly two orders of magnitude (see Figure 4).
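The displacement figures in the touch-screen example are simply hand speed multiplied by latency. A minimal sketch, with a hypothetical function name:

```python
def lag_displacement_mm(hand_speed_m_s: float, latency_ms: float) -> float:
    """Distance by which the image trails the finger.

    Metres per second times milliseconds yields millimetres directly,
    because the factors of 1000 cancel.
    """
    return hand_speed_m_s * latency_ms


for latency_ms in (1.0, 10.0, 100.0):
    lag_mm = lag_displacement_mm(1.0, latency_ms)
    print(f"{latency_ms:5.0f} ms latency -> {lag_mm:5.0f} mm lag at 1 m/s")
```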

Figure 5 The impact of breaking down the 1 ms roundtrip delay.

Technology Challenges: Real Time

When wireless engineers deal with latency restrictions, they need to consider the speed of light. In 1 ms, light travels 300 km. Propagation is only one part of a 1 ms roundtrip latency constraint, however; a possible distribution over the individual components is depicted in Figure 5. It is a very rough distribution of latency within the chain of communication from the sensor through the operating system, the wireless/cellular protocol stack, the physical layer of terminal and base station including the latency of speed of light, the base station’s protocol stack, the trunk line to the compute server, the operating system of the server, the network within the server to the processor, the computation, and back through the equivalent chain to the actuator.
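The speed-of-light limit puts a hard upper bound on how far away the compute server can be. The sketch below is illustrative only: the function name and the `propagation_share` parameter (the fraction of the roundtrip budget spent on propagation) are hypothetical and are not taken from Figure 5.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # vacuum; signals in fiber travel slower


def max_one_way_distance_km(roundtrip_budget_s: float,
                            propagation_share: float = 1.0) -> float:
    """Upper bound on the one-way distance for a given roundtrip budget.

    propagation_share is the assumed fraction of the budget available
    for propagation; the remainder is left for processing in the chain.
    """
    propagation_time_s = roundtrip_budget_s * propagation_share
    return SPEED_OF_LIGHT_KM_S * propagation_time_s / 2.0


# Entire 1 ms roundtrip spent on propagation (no processing at all).
print(f"{max_one_way_distance_km(1e-3):.0f} km")

# Hypothetical case: only 10 percent of the budget left for propagation.
print(f"{max_one_way_distance_km(1e-3, propagation_share=0.1):.0f} km")
```

Even in the idealized first case the server cannot be more than about 150 km away, and any time spent in protocol stacks and computation shrinks that radius further.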

Each and every element of this communications and control chain must be optimized for latency. Clearly, as the time budget on the physical layer is 100 µs maximum, LTE with OFDMA symbol duration of 70 µs is not going to be the solution. A completely new and revamped 5G cellular standard needs to be designed.

Conclusion

At Technical University of Dresden (TU Dresden), research on the new technologies of 5G wireless systems is being conducted using National Instruments (NI) RF and communications tools, including NI LabVIEW system design software and NI PXI products. The TU Dresden 5G wireless lab is one of the first in the world that is comprehensively addressing these challenges. Research results will be used to influence and drive the global standards for the next phase of wireless communications.

Figure 6 The wireless network moving from communications to monitoring to control.

The new challenges of 5G cellular communications can be derived from a user-centric perspective, requiring 10 Gb/s data rates to address network traffic constraints of today’s 4G systems, 10 years of operations for M2M sensing devices, as well as 1 ms real-time latency (see Figure 6). As all three dimensions may not need to be addressed simultaneously for any class of service, it can be assumed that it is feasible to design a new 5G system that can meet these differing requirements, and that it will clearly differ from 4G LTE.

- Data rates of 10 Gb/s will enable immersive virtual reality at levels not foreseen.

- Communicating sensors embedded in our environment will enable a new world of Things 2.0. We will move from cellular/wireless communications to a new level of wireless monitoring.

- And a roundtrip latency of 1 ms will move the world from enjoying today’s wireless communication systems into the new world of wireless control systems. It will dramatically change our life, impacting all aspects of application areas such as health, safety, traffic, education, sports, games and energy.

The result will be that we will see revolutionary steps from today’s wireless communication to future monitoring and to future control networks. The wireless opportunities lying ahead are larger than anyone can foresee. What we experience today is only the very first glimpse. Obviously, with challenges as pointed out here, 5G cellular communication will be the base for our future, impacting societies in ways which cannot be foreseen yet.

Gerhard P. Fettweis earned his Ph.D. from RWTH Aachen in 1990. Thereafter he was at IBM Research and TCSI Inc., California. Since 1994 he has been Vodafone Chair Professor at TU Dresden, Germany, with his main research interests in wireless transmission and chip design. He is an IEEE Fellow and holds an honorary doctorate from TU Tampere.

For more information about the NI platform that enabled this research, go to www.mwjournal.com/tudresdenNI.