Air traffic control (ATC) radar, military air traffic surveillance (ATS) radars and meteorological radars operate in the S-Band frequency range. The excellent meteorological and propagation characteristics make the use of this frequency band beneficial for radar operation – but not just for radar. These frequencies are also of special interest to 4G wireless communications systems such as UMTS long-term evolution (LTE). The test and measurement of co-existing S-Band radar systems and LTE networks is absolutely essential, as performance degradation of mobile devices and networks or even malfunction of ATC radars has been proven.

The Third Generation Partnership Project (3GPP), the standardization body behind LTE, started its work on this new technology back in 2004. Four years later, in December 2008, the work on the initial version of the standard was finished and published as part of 3GPP Release 8 for all relevant technical specifications. As of February 2014, 263 LTE networks are on air in 97 countries. The majority of Time-Division (TD) LTE frequency bands are in the S-Band frequency range where ATC, ATS and meteorological radars operate. A dedicated co-existence study for TD-LTE and S-Band radars is therefore recommended.

This article describes potential issues concerning S-Band radar systems and LTE networks from base stations, mobile devices and radar operating in close proximity to one another. It addresses frequency allocation of these systems, explains the performance degradation or malfunction that can be expected and describes measurement solutions for interference testing of radar and LTE networks. Measurements completed at airports demonstrate possible interference and significant performance degradation of both radar systems and LTE networks.

SPECTRUM ALLOCATION

The S-Band has been defined by IEEE as all frequencies between 2 and 4 GHz. Besides aviation and weather radars, several maritime radars worldwide also operate in this frequency band. LTE is specified to operate in two different modes, frequency division duplex (FDD) and time division duplex (TDD). The two duplex modes use different frequency bands worldwide. The latest version of the LTE standard specifies a total of 29 frequency bands for FDD and 12 frequency bands for TDD.

Table 1 lists the LTE FDD frequency bands; those in the S-Band frequency range are marked in light blue. The frequency bands that are fairly close to any operational S-Band radar system are highlighted in dark blue. One of the highlighted bands in Table 1 is Band 7, which is used throughout Europe. Due to the rapid deployment of base stations and the addition of small cells to increase system capacity, for example at airport terminals, the co-existence of LTE base stations and ATC radar is of major interest.

Another example in terms of LTE and radar co-existence is the anticipated commercialization of the 3.5 GHz spectrum in the U.S. by the Federal Communications Commission (FCC). The FCC hosted a technical workshop this past January that explored the possibilities of using 100 MHz of spectrum in the 3550 to 3650 MHz band for small cell deployment based on shared spectrum access (reference 1). Today, this spectrum is owned by the Department of Defense (DoD) and is being used, for example, by maritime radar (reference 2).

The 2.5 to 2.69 GHz band is allocated to terrestrial mobile services and organized in two 70 MHz blocks of paired spectrum (FDD) and one 50 MHz block of unpaired spectrum (TDD) (see Figure 1). With FDD, the uplink frequencies used by the mobile device to transmit to the base station are allocated from 2500 to 2570 MHz. The 2570 to 2620 MHz block is reserved for TDD, and the 2620 to 2690 MHz block is intended for the downlink, where the base station transmits to the mobile device. 3GPP adopted these frequencies as Band 7 (FDD) and Band 38 (TDD). The 2.7 to 2.9 GHz frequency band is primarily allocated to aeronautical radio navigation, i.e., ground-based fixed and transportable radar platforms for meteorological purposes and aeronautical radio navigation services, as shown in Figure 1.
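The allocation above can be written down as a small lookup table. The following is only an illustrative sketch: the band edges are the ones stated in the text, while the dictionary keys and function names are labels chosen here for the example.

```python
# Band edges in MHz as stated in the text; the keys are labels chosen for this sketch.
BANDS_MHZ = {
    "band7_uplink": (2500, 2570),
    "band38_tdd": (2570, 2620),
    "band7_downlink": (2620, 2690),
    "aero_radionavigation": (2700, 2900),
}


def width_mhz(band):
    """Width of a band in MHz."""
    low, high = BANDS_MHZ[band]
    return high - low


def gap_mhz(lower_band, upper_band):
    """Spacing between the upper edge of one band and the lower edge of another."""
    return BANDS_MHZ[upper_band][0] - BANDS_MHZ[lower_band][1]


if __name__ == "__main__":
    for name in BANDS_MHZ:
        print(f"{name}: {width_mhz(name)} MHz wide")
    print("Band 7 downlink to radar band:",
          gap_mhz("band7_downlink", "aero_radionavigation"), "MHz")
```

With these edges, only 10 MHz separate the top of the Band 7 downlink from the bottom of the aeronautical radio navigation band.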

Figure 1 International Telecommunication Union radio regulations in the 2.5 to 3.1 GHz band (reference 3).

Carrier frequencies of the radars mentioned are assumed to be uniformly distributed throughout the S-Band (reference 4). As depicted in Figure 1, the two frequency bands for mobile communications and aeronautical radio navigation are located very close to each other. As an example, some ATC radar systems operate at 2.7 to 2.9 GHz; others, such as the AN/SPY-1 radar operated by the U.S. Navy, operate at a frequency of 3.5 GHz. Most of these types of radar apply pulse and pulse compression waveforms.

After pulse transmission, the radar switches from transmitter to receiver and receives the radar echo pulses from targets inside the observation area. Receivers use low noise front ends because echo signal power is extremely low. This high sensitivity makes receivers susceptible to interference signals. LTE networks using nearby frequencies can cause these interferences and may significantly degrade the radar performance.

CO-EXISTENCE OF LTE AND S-BAND RADAR

S-Band radar may disturb LTE networks, for example by degrading performance through lower throughput, indicated by an increasing block error rate (BLER). At first glance, this is not a major drawback, but spectral efficiency, power reduction and cost are of great importance for any mobile network operator.

3GPP specifications provide solutions, e.g., dynamic frequency selection or transmit power control, in order not to disturb other signals. However, radar parameters such as transceiver bandwidth, spurious emissions, transmit power, transmit antenna pattern, polarization and waveform may limit the performance of mobile services, because these systems obey different regulations.

ITU Recommendation M.1464-1 (reference 4) mentions ATC radar systems operating in mono-frequent or frequency diversity mode. The RF emission bandwidth of these radars ranges from several kHz to 10 MHz, with transmit power of up to 91.5 dBm. Since the mobile network has standardized filtering in line with 3GPP, disturbance should not occur. Yet measurement results show that 4G user equipment and base stations are influenced by radar signals and should be tested to ensure proper functionality and efficient power and spectrum usage.
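To put the 91.5 dBm figure into more familiar units, the standard dBm-to-watt conversion can be applied. This is a minimal sketch; the function name is chosen here for illustration.

```python
def dbm_to_watts(power_dbm):
    """Convert a power level in dBm to watts (0 dBm corresponds to 1 mW)."""
    return 10 ** ((power_dbm - 30.0) / 10.0)


if __name__ == "__main__":
    radar_w = dbm_to_watts(91.5)
    print(f"91.5 dBm is about {radar_w / 1e6:.2f} MW")
```

The result is on the order of 1.4 MW, which illustrates why standardized filtering alone may not be enough to keep radar energy out of a nearby LTE receiver.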

Figure 2 Radar amplifier chain in the frequency domain and out-of-band and in-band interference.

On the other hand, the presence of LTE signals and less selective filters in the radar receiver can cause significant interference or even damage to the radar system. This may be indicated by false target detection or by the high power state of the receiver protector. The latter can occur when LTE signals and spurious emissions are very strong and received by the radar. In the case of weaker signals or signals outside the nominal receiver bandwidth, the radar could go into compression and produce nonlinear responses or react by raising the constant false alarm rate (CFAR) threshold (see Figure 2). Targets that are present can thereby be lost in consecutive measurements and targets with low power echoes cannot be detected.
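The effect of a raised CFAR threshold can be illustrated with a cell-averaging CFAR detector. This is only a sketch under assumptions: the article does not state which CFAR variant a given radar uses, and the cell count, false alarm probability and power values below are made up for illustration. The scale factor is the textbook expression for square-law detected noise.

```python
def ca_cfar_scale(num_training_cells, pfa):
    """Threshold multiplier of a cell-averaging CFAR for a desired false alarm probability."""
    n = num_training_cells
    return n * (pfa ** (-1.0 / n) - 1.0)


def ca_cfar_detect(cell_power, training_powers, pfa=1e-6):
    """Compare the cell under test with a threshold scaled from the training-cell mean."""
    noise_estimate = sum(training_powers) / len(training_powers)
    threshold = ca_cfar_scale(len(training_powers), pfa) * noise_estimate
    return cell_power > threshold, threshold


if __name__ == "__main__":
    echo_power = 40.0            # illustrative target echo power (linear units)
    quiet = [1.0] * 16           # training cells containing receiver noise only
    interfered = [4.0] * 16      # same cells with the noise floor raised by about 6 dB
    for label, cells in (("no interference", quiet), ("with interference", interfered)):
        detected, threshold = ca_cfar_detect(echo_power, cells)
        print(f"{label}: threshold {threshold:.1f}, target detected: {detected}")
```

In this example the same echo is detected against the quiet background but falls below the threshold once the interference raises the estimated noise level, which corresponds to the loss of low-power targets described above.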

Figure 3 Vehicle with measuring equipment recording I/Q data at a German airport.

The performance degradation depends strongly on the type of signal disturbing the radar. Continuous-wave or noise-like modulation signals with constant power disturb the azimuth sectors of the radar in which the interferer is located. The effect of pulsed interference depends strongly on its synchronism with the radar and on the design of the receiver, the signal processing and the mode of operation; for example, frequency-agile radar systems may be less affected than non-agile ones.

Interference entering the receiver chain and finally the detector of the radar is depicted in Figure 2. While in-band interference will raise the noise floor, out-of-band interference may overload the amplifiers and decrease the signal power. Either way, the signal-to-noise ratio (SNR) of a target echo signal at the detector is reduced, which is why the probability of detection is reduced.
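For the in-band case, the loss in SNR follows directly from adding the interference power to the noise power: the loss in dB is 10·log10(1 + I/N), with I/N in linear units. The sketch below assumes noise-like interference that adds incoherently to the receiver noise; the I/N values are illustrative and not taken from the measurements.

```python
import math


def snr_loss_db(inr_db):
    """SNR reduction in dB when interference at the given I/N (in dB) adds to the noise."""
    return 10.0 * math.log10(1.0 + 10.0 ** (inr_db / 10.0))


if __name__ == "__main__":
    for inr_db in (-10.0, -6.0, 0.0, 10.0):
        print(f"I/N = {inr_db:+.0f} dB -> SNR loss {snr_loss_db(inr_db):.2f} dB")
```

Interference equal in power to the receiver noise (I/N = 0 dB) already costs about 3 dB of SNR, while interference 10 dB below the noise costs roughly 0.4 dB.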

According to 3GPP Technical Specification (TS) 36.101 and TS 36.104, LTE base stations are allowed to transmit a maximum of 46 dBm with additional antenna gain of approximately 15 dBi. Antenna height may be up to 30 m above ground. To estimate the disturbance of radar caused by mobile services, technical radar parameters such as radar receiver sensitivity, noise figure, recovery time, bandwidth, antenna pattern and polarization have to be known.
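A first-order estimate of the LTE power arriving at a radar site can be obtained from the EIRP and the free-space path loss. This is a rough sketch only: it assumes free-space propagation and an isotropic receive antenna, and it ignores the radar antenna gain, filtering and polarization mentioned above. The distances are illustrative.

```python
import math


def eirp_dbm(tx_power_dbm, antenna_gain_dbi):
    """Equivalent isotropically radiated power in dBm."""
    return tx_power_dbm + antenna_gain_dbi


def fspl_db(distance_km, freq_mhz):
    """Free-space path loss in dB for a distance in km and a frequency in MHz."""
    return 20.0 * math.log10(distance_km) + 20.0 * math.log10(freq_mhz) + 32.44


if __name__ == "__main__":
    eirp = eirp_dbm(46.0, 15.0)  # maximum values quoted in the text
    print(f"EIRP: {eirp:.1f} dBm")
    for d_km in (0.5, 1.0, 5.0):
        rx_dbm = eirp - fspl_db(d_km, 2690.0)
        print(f"{d_km:.1f} km: {rx_dbm:.1f} dBm at an isotropic antenna")
```

Under these assumptions the base station reaches 61 dBm EIRP, and the path loss at 2690 MHz over 1 km is about 101 dB.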

The large variety of radar systems, their inherent design and technical parameters cause different and nearly unpredictable reactions to LTE signals in their environment. Additionally, antenna steering direction and output power of base stations can increase radar interference. Radar, as an extremely security-relevant system, therefore has to be tested in the presence of 4G networks.

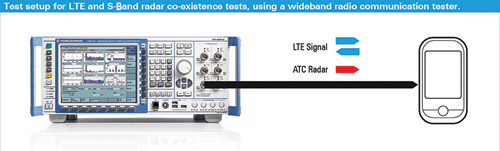

Figure 4 Test setup for LTE and S-Band radar co-existence tests, using a wideband radio communication tester.

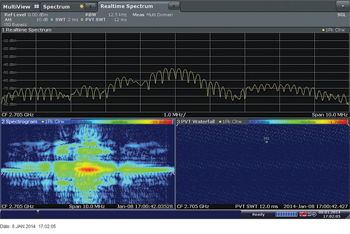

Figure 5 Captured S-Band radar signal (10 MHz) analyzed with R&S FSW signal and spectrum analyzer in real-time mode.

TEST SOLUTIONS AND MITIGATION

Test and measurement solutions have been developed to apply different synthetic as well as recorded signals to both LTE networks and S-Band radar systems. These test solutions allow verification of proper functionality and, in the case of interference or even malfunction, development of mitigation techniques.

Performance and Interference Testing of LTE Terminals

For chipset and wireless device testing, a wideband radio communication tester is widely used. Engineers use it for protocol, signaling and mobility tests as well as for RF parametric tests of transmitter and receiver performance of a mobile terminal. 3GPP has defined multiple test cases for all three test areas: protocol/signaling, mobility and radio resource management (RRM), as well as RF conformance.

This ensures minimum compliance with the current 3GPP standard. However, the majority of receiver and performance tests for LTE only assume the presence of another LTE signal or 3G signals, such as WCDMA (UMTS). There are no tests defined by 3GPP for the presence of an S-Band radar signal in adjacent frequencies to the received signal from an LTE base station.

In order to test real-world conditions, it would be beneficial to record an S-Band radar signal and play it back on an adjacent frequency while performing a throughput test or receive sensitivity test on an LTE-capable terminal that is, for example, operational in Band 7 (detailed description in reference 8). The ATC radar signal from an airport could be recorded. To do so, a universal network scanner would be tuned to the desired S-Band radar frequency to capture the RF signal, perform the downconversion and thus convert the RF signal to I/Q data. Figure 3 shows the measurement vehicle recording an ATC radar signal at the airport.

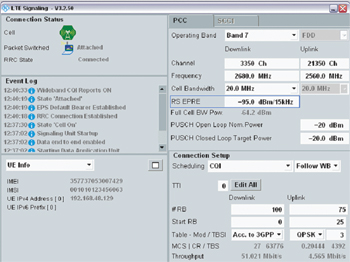

Figure 6 LTE signaling configuration for modified reference sensitivity level test (BLER measurement).

The recorded I/Q data can now be used in the lab and played back as an arbitrary waveform (ARB) using the embedded signal generator functionality in the wideband communication tester. At the same time, the wideband communication tester acts as a network emulator, simulating an LTE cell, e.g., in Band 7, with which the device under test (DUT) registers (see Figure 4).

To carry out a co-existence test, the recorded radar signal can now be applied during a receive sensitivity level test of the DUT, while measuring the BLER for a given LTE signal. To verify the above assumptions of LTE and S-Band radar interfering with each other, several measurements were carried out. The recorded radar signal that was used for these tests originated from an operational ATC radar at a distance of approximately 1.5 km from a major German airport.

As described, the I/Q data is played back using the ARB functionality embedded in the wideband radio communication tester at a carrier frequency of 2.69 GHz and, in a second test, at 2.693 GHz. The measured radar transmitted consecutive pulses at different frequencies using a total bandwidth of 10 MHz. As the radar rotates during operation, radar signals arrive at the receiver at equidistant intervals of 1.1 ms (see Figure 5). In the measurement data, a maximum power level of 0 dBm was detected by the spectrum analyzer, and the total SNR varied between 10 and 70 dB depending on the measurement position.
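The two playback frequencies can be related to the Band 7 downlink block with a short calculation. This sketch assumes that the 10 MHz of radar bandwidth is centered on the playback carrier, which the article does not state explicitly; the function names are chosen here for illustration.

```python
BAND7_DOWNLINK_MHZ = (2620.0, 2690.0)  # downlink block as given in the spectrum allocation


def occupied_range_mhz(carrier_mhz, bandwidth_mhz):
    """Frequency range covered by a signal centered on carrier_mhz (assumption)."""
    half = bandwidth_mhz / 2.0
    return carrier_mhz - half, carrier_mhz + half


def overlap_mhz(range_a, range_b):
    """Width of the overlap between two frequency ranges, zero if they do not overlap."""
    low = max(range_a[0], range_b[0])
    high = min(range_a[1], range_b[1])
    return max(0.0, high - low)


if __name__ == "__main__":
    for carrier in (2690.0, 2693.0):
        radar = occupied_range_mhz(carrier, 10.0)
        print(f"carrier {carrier:.0f} MHz: radar spans {radar[0]:.0f} to {radar[1]:.0f} MHz, "
              f"overlap with Band 7 downlink {overlap_mhz(radar, BAND7_DOWNLINK_MHZ):.0f} MHz")
```

Under this assumption, part of the played-back radar spectrum falls inside the upper edge of the Band 7 downlink block in both tests.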

To analyze the receiver performance of an LTE-capable terminal in the presence of an S-Band radar signal, the reference sensitivity level test described in section 7.3 of 3GPP TS 36.521-1 for UE RF conformance, which is based on a BLER measurement, was adopted and slightly modified. The test described in this section requires the device to transmit at maximum output power, which is defined as +23 dBm for all commercial LTE frequency bands.

Figure 7 Reference sensitivity level test (BLER measurement) without S-Band radar signal present.

In addition, the orthogonal channel noise generator (OCNG) on the downlink has to be enabled to emulate other users being active within this bandwidth. In general, the receive sensitivity test is designed to verify QPSK modulation only, with a full resource block (RB) allocation in the downlink and without MIMO. Depending on the frequency band and bandwidth being tested, the reference sensitivity level varies.

For Band 7 and 20 MHz, it corresponds to a reference signal power level of -91.3 dBm full cell bandwidth power. In the uplink, an allocation of 75 RB is used with an offset of 25 RB, which places it as close as possible to the downlink. The following sensitivity test is based on a BLER measurement. The wideband radio communication tester counts the reported acknowledged (ACK) and non-acknowledged (NACK) data packets, from which an average throughput is calculated.
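The relationship between ACK/NACK counts, BLER and average throughput can be sketched as follows. This is a simplified model, not the tester's actual algorithm: it assumes one transport block of fixed size per 1 ms subframe, and the counts and transport block size are hypothetical values chosen for illustration.

```python
def bler(ack_count, nack_count):
    """Block error rate: share of transport blocks reported as NACK."""
    total = ack_count + nack_count
    if total == 0:
        raise ValueError("no transport blocks reported")
    return nack_count / total


def average_throughput_mbps(ack_count, nack_count, tbs_bits, tti_s=1e-3):
    """Average throughput in Mbit/s, counting only acknowledged transport blocks.

    Assumes one transport block of tbs_bits per transmission time interval.
    """
    total = ack_count + nack_count
    if total == 0:
        raise ValueError("no transport blocks reported")
    return ack_count * tbs_bits / (total * tti_s) / 1e6


if __name__ == "__main__":
    acks, nacks, tbs = 970, 30, 10_000  # hypothetical counts and transport block size
    print(f"BLER: {bler(acks, nacks):.1%}")
    print(f"Average throughput: {average_throughput_mbps(acks, nacks, tbs):.2f} Mbit/s")
```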

For the test, specific test signals, the reference measurement channels (RMC) for the downlink and uplink, are standardized. These test signals allow a maximum throughput based on the specified signal configuration in terms of allocated bandwidth, modulation scheme and transport block size (TBS). The standard test is only specified for QPSK modulation, where the maximum achievable throughput is relatively low compared with any higher-order modulation scheme. Higher-order modulation schemes would require a better SNR.

Any interference would impact the achievable throughput when using, for example, 16QAM and/or 64QAM, resulting in a much higher BLER and a much lower throughput. To verify this assumption, the standardized test in 3GPP TS 36.521-1, section 7.3, was modified: the scheduling type was changed from RMC to channel quality indicator (CQI), specifically to the "follow wideband CQI" mode (see Figure 6). This ensures more real-world conditions during the tests.

Figure 8 Modified reference sensitivity level measurement (BLER) while S-Band radar signal is present.

With this test method, the LTE device measures the channel quality on the downlink signal using the embedded reference signals. The measured receive quality is translated to a CQI value that is reported back to the network. The scheduler in the LTE base station can then use this feedback to adapt the resource allocation and the modulation and coding scheme to the actual channel conditions as seen by the device. This results in better performance, namely a higher average throughput.
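The link between a reported CQI value and the modulation scheme can be sketched with a simple lookup. The mapping below assumes the basic 4-bit CQI table of 3GPP TS 36.213 (without 256QAM); a real scheduler also selects a code rate and resource allocation, which this sketch leaves out.

```python
BITS_PER_SYMBOL = {"QPSK": 2, "16QAM": 4, "64QAM": 6}


def modulation_for_cqi(cqi):
    """Modulation scheme associated with a reported CQI index (0 to 15)."""
    if not 0 <= cqi <= 15:
        raise ValueError("CQI index must be between 0 and 15")
    if cqi == 0:
        return None  # out of range: no transmission format can be supported
    if cqi <= 6:
        return "QPSK"
    if cqi <= 9:
        return "16QAM"
    return "64QAM"


if __name__ == "__main__":
    for cqi in (15, 9, 4):
        modulation = modulation_for_cqi(cqi)
        print(f"CQI {cqi}: {modulation}, {BITS_PER_SYMBOL[modulation]} bits per symbol")
```

A drop in reported CQI therefore pushes the scheduler from 64QAM toward QPSK, which lowers the number of bits carried per symbol and hence the achievable throughput.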

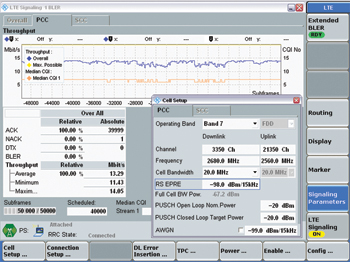

If interference is present, the device measures a lower received signal quality and reports a lower CQI value, which causes the base station to use a lower modulation and coding scheme. Figure 7 shows the results for the first test without the radar signal being present. All packets were acknowledged (100 percent ACK), and an average throughput of 13.29 Mbit/s was achieved.

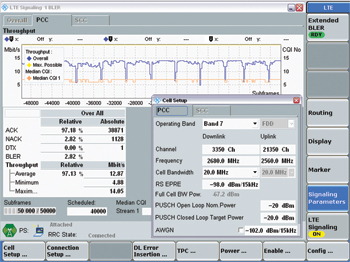

In comparison, Figure 8 shows the result for the reference sensitivity level test with the radar signal being present at a carrier frequency of 2690 MHz at an output power of -7.00 dBm, comparable to the power levels measured at an airport. All other test parameters are the same. The NACK rate increased to almost 3 percent, and there is a massive drop in data throughput (blue curve, upper graph) in the presence of the pulsed radar signal. The throughput drops whenever the radar beam points toward the mobile terminal.

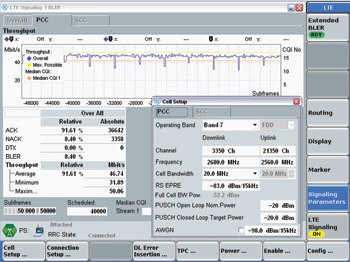

For the second measurement, the power level of the base station was increased to -83 dBm/15 kHz. As depicted in Figure 9, the block error rate increased even for a higher output power for the LTE downlink signal. Additional tests showed that the throughput and CQI decreased even when the radar was operating at a frequency of 2700 MHz, depending on the DUTs.
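Power levels in this test are quoted both per 15 kHz subcarrier and as full cell bandwidth power. The sketch below converts between the two, assuming a 20 MHz LTE carrier with 100 resource blocks of 12 subcarriers each and equal power on every subcarrier; the function names are chosen here for illustration.

```python
import math

SUBCARRIERS_20MHZ = 100 * 12  # 100 resource blocks with 12 subcarriers each


def per_subcarrier_to_total_dbm(power_dbm_per_15khz, subcarriers=SUBCARRIERS_20MHZ):
    """Full cell bandwidth power for a given power per 15 kHz subcarrier."""
    return power_dbm_per_15khz + 10.0 * math.log10(subcarriers)


def total_to_per_subcarrier_dbm(total_power_dbm, subcarriers=SUBCARRIERS_20MHZ):
    """Power per 15 kHz subcarrier for a given full cell bandwidth power."""
    return total_power_dbm - 10.0 * math.log10(subcarriers)


if __name__ == "__main__":
    print(f"-83 dBm/15 kHz -> {per_subcarrier_to_total_dbm(-83.0):.1f} dBm full bandwidth")
    print(f"-91.3 dBm full bandwidth -> {total_to_per_subcarrier_dbm(-91.3):.1f} dBm/15 kHz")
```

The two quantities differ by about 30.8 dB for a 20 MHz carrier, so values given in different units should be converted before they are compared.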

Figure 9 BLER measurement with S-Band radar signal present.

The results presented in Figures 8 and 9 show that there are co-existence issues when an LTE-capable terminal has an active data connection running and comes close to an S-Band radar signal. In the real world, the receive power levels of the serving LTE base station might even be lower than the ones used in the test, in which case we would see a higher BLER resulting in lower throughput or even connection loss and thus impacting mobile services.

Radar Interference Test

When a radar system is being designed or a 2.6 GHz base station is being set up, several aspects have to be considered to ensure efficient functionality, robustness and co-existence of both systems. For test and measurement, an interference test system (see Figure 10) was developed to test S-Band radar systems against interference from LTE signals (reference 5).

The system is able to generate realistic 4G scenarios in the frequency range of 2.496 to 2.69 GHz, including the generation of multiple base and mobile stations. The test system can be set up at a distance of 100 to 300 m in front of a radar system that is in normal operating mode. The radar operator is then able to immediately test and measure the ATC radar in the presence of LTE signals.

Measurements performed using the radar test system have shown that out-of-band and in-band interference mechanisms can become critical and cause ATC radar to become blind in certain azimuth sectors and under certain conditions, e.g., when broadcasting an LTE (reference 6) or WiMAX (reference 7) signal towards the radar. This reduces the probability of detection and causes the radar to lose targets. Reference 6 addresses “challenging case” and “typical case” scenarios of 4G networks at airports and describes mitigation techniques.

Figure 10 Radar interference test system R&S TS6650.

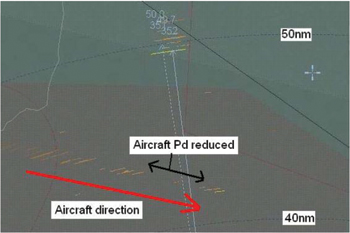

Dominant interference is caused by both mobile and base stations, which raise the noise floor. Reference 7 describes tests in which the radar was disturbed using continuous-wave and pulsed signals. The study shows degradation in the probability of detection depending on the applied signal. Figure 11 shows where the probability of detection (Pd) is reduced due to the presence of a 4G signal; the aircraft even disappeared from the radar screen (reference 7). Given these results, co-existence of radar and 4G networks should be tested.

Mitigation Techniques

Different approaches can mitigate disturbances on radar and 4G base stations. One approach is to reduce transmit power at the base station and radar. However, this would reduce the maximum range of the radar and coverage of the base station. Another approach would be to increase frequency separation or distance between the two services, but frequency selection may be impossible due to technical restrictions. There are also less expensive techniques, such as not pointing mobile service base station antennas towards S-Band radar. Basic approaches involve improving receiver selectivity, filtering transmitter signals, and reducing unwanted spurious emissions on both sides. These steps would allow co-existence. In order to validate mitigation techniques, test and measurement is necessary.

CONCLUSION

Up-and-coming 4G networks such as LTE and WiMAX™ operate in the 2.6 GHz frequency band, close to the frequencies used by ATC S-Band radar systems. To ensure proper functionality of the radar and efficient 4G networks, co-existence has to be proven. The tests and measurements explained in this article were conducted at airports to test radar as well as LTE mobile terminals in the presence of disturbing signals, and they showed that performance degradation can occur on both sides. This reduces the probability of detection of the radar and the throughput of 4G mobile networks.

Figure 11 4G signal at 2685 MHz (reference 7).

References

1. 3.5 GHz Spectrum Access Workshop; retrieved from www.fcc.gov/events/35-ghz-spectrum-access-system-workshop; April 30, 2014.

2. FCC sets workshop date for 3.5 GHz band spectrum use; retrieved from www.rcrwireless.com/article/20131119/fcc_wireless_regulations/fcc-sets-workshop-date-for-3-5-ghz-band-spectrum-use/; November 22, 2013.

3. International Telecommunication Union (ITU), Radio Regulations – Articles S5, S21 and S22 Appendix S4, WRC-95 Resolutions, WRC-95 Recommendations, Geneva 1996, ISBN 92-61-05171-5.

4. ITU Recommendation M.1464-1, Characteristics of Radiolocation Radars, and Characteristics and Protection Criteria for Sharing Studies for Aeronautical Radionavigation and Meteorological Radars in the Radiodetermination Service Operating in the Frequency Band from 2700 to 2900 MHz; July 2003.

5. R&S®TS6650 Interference Test System for ATC Radar Systems; retrieved from www.rohde-schwarz-ad.com/docs/radartest/TS6650_bro_en.pdf; November 22, 2013.

6. realWireless, Ofcom, Final Report – Airport Deployment Study Ref MC/045, Version 1.5; July 19, 2011; retrieved from www.ofcom.org.uk/static/spectrum/Airport_Deployment_Study.pdf; November 22, 2013.

7. Cobham Technical Services, Final Report – Radiated Out-of-Band Watchman Radar Testing at RAF Honington, April 2009; retrieved from http://stakeholders.ofcom.org.uk/binaries/spectrum/spectrum-awards/awards-in-preparation/757738/589_Radiated_Out-of-Band_Wa1.pdf; November 22, 2013.

8. Application Note, Coexistence Test of LTE and Radar Systems, March 2014; retrieved from www.rohde-schwarz.de/appnote/1MA211; April 1, 2014.