These days, engineers face a myriad of choices when seeking a solution for signal characterization: spectrum analyzers, signal analyzers, vector signal analyzers and real-time analyzers. When assessing the alternatives, several questions may come to mind: What’s the best choice for a specific set of measurements? How effective is it to get two or more analyzer types in a single instrument? Is it more cost-effective to buy one type initially then upgrade later when needed?

Architecturally, all four types have much in common. This article begins with a look at the similarities and differences. From there it describes each type and the possible combinations of capabilities that can address a variety of requirements.

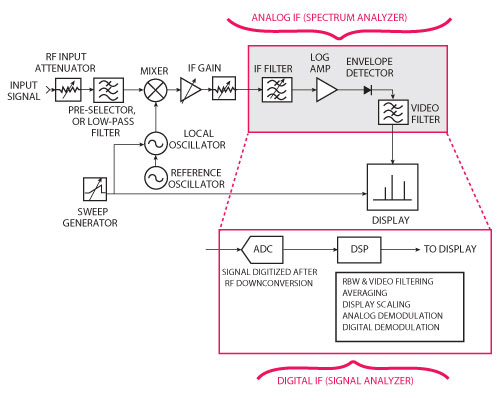

Figure 1 Traditional spectrum analyzer downconversion architecture with a digitized IF version.

They’re All “Signal Analyzers”

The common usage of the term signal analyzer has come to include several evolved versions of the traditional spectrum analyzer. Swept-tuned spectrum analyzers have been around for more than 40 years and have become a common tool for engineers who work with RF and microwave signals.

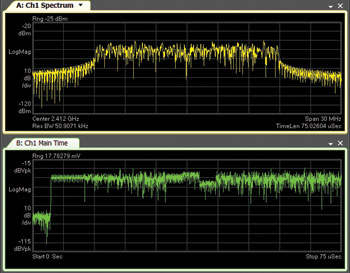

Figure 2 Operating in fixed-tuned mode, a signal analyzer can display magnitude vs. frequency (upper trace) and magnitude vs. time (lower trace).

Figure 1 shows a simplified block diagram of a traditional swept-tuned analyzer — and this provides a foundation for understanding the other types of analyzers. The architecture uses a superheterodyne receiver in which a swept frequency oscillator and broadband mixer downconvert the incoming RF or microwave signal to an intermediate frequency (IF). The resulting signal can be more easily filtered and its magnitude directly measured using an analog envelope detector.

The lower portion of Figure 1 shows a recent variation: many of today’s signal analyzers combine a superheterodyne architecture with an analog-to-digital converter (ADC) and digital signal processing (DSP) to implement a digital IF section. In this approach, DSP replaces, and improves on, troublesome analog functions such as bandwidth filtering, log scaling and video averaging. Because the IF is available as sampled data, this digital approach also opens the door to a wide range of new functionality.

Swept Spectrum Analysis, Enhanced

A signal analyzer with a digital IF allows either swept-tuned or fixed-tuned measurements. The swept-tuned mode is the traditional approach: the analyzer’s downconverting local oscillator (LO) is swept across a range of frequencies. Fixed-tuned mode is similar to the zero-span mode found on traditional spectrum analyzers. In fixed-tuned mode, the LO frequency remains stationary and a band of frequencies is digitized by the ADC.

Figure 2 shows a fixed-tuned measurement of a wideband communication system: the upper trace shows spectrum, or power versus frequency; the lower trace shows RF envelope, or power versus time. In this example, the waveform was digitized during a 75-microsecond period and the analyzer calculated the spectrum using a fast Fourier transform (FFT). These measurements are scalar: they report power spectrum or RF envelope power, without phase information. This equates to the functionality of a spectrum analyzer and is a starting point for the capabilities provided by several types of signal analyzers.
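The two scalar traces described above can be sketched from one block of digitized samples. This is a minimal illustration, not any analyzer's actual processing: the function names, the 64-point block and the test tone are assumptions made for the example, and a real instrument would also apply windowing and calibration.

```python
import cmath
import math

def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out

def power_spectrum_db(iq):
    """Power versus frequency (upper trace), centered on the tuned frequency."""
    n = len(iq)
    spec = fft(iq)
    # Put negative frequency offsets first so the center bin is the LO frequency.
    spec = spec[n // 2:] + spec[:n // 2]
    # Floor at 1e-20 to avoid log10(0) on empty bins.
    return [10 * math.log10(max(abs(s / n) ** 2, 1e-20)) for s in spec]

def envelope_power(iq):
    """Power versus time (lower trace): squared magnitude of each sample."""
    return [abs(s) ** 2 for s in iq]

# Hypothetical input: a unit-amplitude complex tone offset +8 bins from center.
N = 64
tone = [cmath.exp(2j * math.pi * 8 * i / N) for i in range(N)]
spectrum = power_spectrum_db(tone)
peak_bin = max(range(N), key=lambda k: spectrum[k]) - N // 2
envelope = envelope_power(tone)
```

Both traces come from the same samples, which is why a fixed-tuned analyzer can show them side by side for a single acquisition.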

Figure 3 In VSA, digitized IF data can be used to perform demodulation with typical displays such as constellation diagrams, error vector magnitude (EVM) and automatic detection of channel resources.

Vector Signal Analyzers

The first major extension of signal analysis based on the sampled digital IF was the vector signal analyzer (VSA), which was introduced in the early 1990s. In a VSA, the digitizing process utilizes complex or in-phase/quadrature (I/Q) sampling, and this preserves all signal characteristics that occur within the digitized bandwidth. As a result, a VSA can provide a full range of time-, frequency- and modulation-domain analysis capabilities, including demodulation and modulation-quality assessments. Figure 3 shows an example of digital demodulation of an LTE signal. The VSA version of the digital IF architecture often adds two more features: time capture and playback, and IF magnitude triggering. These capabilities are useful in the characterization of complex signals and dynamic signal environments.
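As one example of a modulation-quality assessment, RMS error vector magnitude can be computed from complex (I/Q) samples as the ratio of error power to reference power. The sketch below assumes ideal QPSK points at ±1 ± 1j and symbol-rate samples that are already synchronized. Those assumptions, the function names and the sample values are illustrative and do not describe any particular VSA.

```python
import math

def nearest_qpsk_point(sample):
    """Ideal QPSK point (+/-1 +/-1j) in the same quadrant as the sample (assumed constellation)."""
    i = 1.0 if sample.real >= 0 else -1.0
    q = 1.0 if sample.imag >= 0 else -1.0
    return complex(i, q)

def evm_percent(measured):
    """RMS EVM in percent: sqrt(sum |meas - ref|^2 / sum |ref|^2) * 100."""
    refs = [nearest_qpsk_point(s) for s in measured]
    err_power = sum(abs(m - r) ** 2 for m, r in zip(measured, refs))
    ref_power = sum(abs(r) ** 2 for r in refs)
    return 100.0 * math.sqrt(err_power / ref_power)

# Hypothetical symbols: every point is displaced by +0.1 on the I axis.
measured = [1.1 + 1.0j, -0.9 + 1.0j, -0.9 - 1.0j, 1.1 - 1.0j]
evm = evm_percent(measured)  # roughly 7.07 percent
```

The calculation needs both I and Q for every symbol, which is why it depends on vector rather than scalar data.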

In time capture operations, the analyzer saves a gap-free stream of I/Q samples from the IF in a large memory buffer. Because the buffer is typically much larger than that needed for a single measurement, it is easy to implement a continuous playback operation during post-capture processing. Replay can be controlled in several ways: adjusting replay speed, selecting specific portions of the buffer for analysis, and more. One key feature of post-processing is the ability to change the type of analysis: any type of vector measurement or demodulation can be performed without acquiring new data. What’s more, any measurement parameter can be changed to optimize the measurement or extract new information.

VSA software, such as the Agilent 89600, can provide an enhancement to this post-processing flexibility that is especially useful when the capture buffer contains multiple signals: the ability to change the center frequency and analysis span. These “tune” and “zoom” operations are second nature to RF engineers, and in VSA post-processing they even allow digital demodulation of multiple signals, at the same or different center frequencies, from a single capture.
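Conceptually, "tune" is a frequency shift of the captured I/Q samples and "zoom" is a reduction of the analysis span by filtering and decimation. The sketch below assumes a crude boxcar average as the low-pass filter, and `tune_and_zoom` is a hypothetical helper rather than part of any VSA product. It shows one signal being isolated from a two-signal capture.

```python
import cmath
import math

def tune_and_zoom(iq, fs, f_offset, decim):
    """Shift f_offset (Hz) to the center, then reduce the span by a factor of decim."""
    # Tune: multiply by a complex exponential so f_offset moves to 0 Hz.
    shifted = [s * cmath.exp(-2j * math.pi * f_offset * n / fs)
               for n, s in enumerate(iq)]
    # Zoom: average each block of decim samples (crude low-pass), keep one output per block.
    out = []
    for start in range(0, len(shifted) - decim + 1, decim):
        block = shifted[start:start + decim]
        out.append(sum(block) / decim)
    return out, fs / decim

# Hypothetical capture: two unit tones at +100 kHz and -200 kHz, sampled at 1 MHz.
fs = 1e6
capture = [cmath.exp(2j * math.pi * 100e3 * n / fs) +
           cmath.exp(-2j * math.pi * 200e3 * n / fs) for n in range(1000)]

zoomed, new_fs = tune_and_zoom(capture, fs, f_offset=100e3, decim=10)
```

Calling the same function again with a different `f_offset` analyzes the other signal without acquiring new data. A practical implementation would use a proper decimating filter; the boxcar is used here only because its nulls happen to fall on the unwanted tone.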

In a VSA, memory and high-speed DSP also provide an IF magnitude trigger. ASICs or FPGAs calculate the total magnitude of the IF signal in real time (i.e., without gaps) and test it against user-specified trigger parameters. When the trigger condition is met, a single measurement or time capture is automatically initiated. With acquisition memory arranged as a circular buffer, it is possible to implement both negative (pre-trigger) and positive (post-trigger) delays. This also makes it possible to provide oscilloscope-like trigger functions such as adjustable trigger polarity and holdoff.
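The circular-buffer idea can be sketched in software with a bounded deque that always holds the most recent samples. This is only an illustration of the principle: real analyzers do this in hardware, and the function name, the rising-edge-only polarity and the sample values are assumptions for the example.

```python
from collections import deque
from itertools import islice

def magnitude_trigger_capture(samples, level, pre, post):
    """Capture `pre` samples before and `post` samples after the first
    rising crossing of `level`; return None if the trigger never fires."""
    history = deque(maxlen=pre)  # circular buffer of the latest pre-trigger samples
    stream = iter(samples)
    prev_mag = None
    for s in stream:
        mag = abs(s)
        # Rising-edge polarity: previous magnitude below level, current at or above.
        if prev_mag is not None and prev_mag < level <= mag:
            return list(history) + [s] + list(islice(stream, post))
        history.append(s)
        prev_mag = mag
    return None

# Hypothetical IF stream: low-level noise floor, a short burst, then quiet again.
stream = [0.1] * 10 + [1.0] * 5 + [0.1] * 10
captured = magnitude_trigger_capture(stream, level=0.5, pre=3, post=4)
```

Because the deque discards its oldest entry automatically, the pre-trigger samples are available at the moment the trigger fires, which is what makes a negative delay possible.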

Evolution: Vector Signal Analysis as Application Software

The first vector signal analyzers were a dedicated combination of measurement hardware and embedded software. In subsequent years, the introduction of new types of signal samplers for RF signals meant that vector sampled data was available from many different sources.

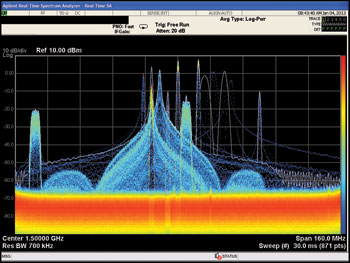

Figure 4 Advanced displays of real-time data can reveal signal behavior over time.

About 12 years ago, the availability of these new samplers led to a splitting of the analyzer hardware and the vector signal analysis functions — and now the term “signal analyzer” is increasingly used to reflect the hardware platform that downconverts and digitizes RF and microwave signals. The separate VSA application can also be used on signals acquired with digital oscilloscopes, logic analyzers and modular digitizers, and on signals created with math or simulation software.

Real-Time Analyzers: Fast, Gap-Free Processing

With sufficient processing power, typically from a combination of ASICs and FPGAs, it is now possible to continuously compute basic power spectra from a digitized data stream. Although this gap-free analysis is scalar (it does not provide vector analysis or demodulation), it allows the analyzer to keep up with highly dynamic spectrum behavior and is the foundation of the category called real-time analyzers.

Real-time analyzers are especially useful for understanding dynamic spectral environments and agile signals, and for the detection of unexpected and infrequent signal behavior. There is one caveat: a real-time analyzer produces a tremendous amount of spectral data in a short period. The solutions are advanced, data-dense displays and frequency-mask triggering (FMT).

Advanced Displays for More and Faster Data

Whether generated from real-time spectral calculations or gap-free time captures, the calculation of thousands of measurements per second requires intuitive representation of rapidly changing data. Here’s why: the human eye can detect about 30 images per second, whereas a typical real-time analyzer may produce almost 300,000 spectra per second. Thus, in a default configuration, each display update must represent about 10,000 spectra (300,000 divided by 30) in a meaningful way.

For many years, similar challenges have been met with displays such as spectrograms and waterfalls. Recently, various frequency-of-occurrence displays — density or cumulative history — have been introduced with descriptions such as digital phosphor or digital persistence. Figure 4 shows a density (or histogram) display of a wideband environment that contains several agile signals containing pulses and digital modulation. Color is used to indicate how often specific signal values (e.g., a specific amplitude/frequency point) occur over the course of millions of spectrum measurements.
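A density display amounts to a two-dimensional histogram: for every spectrum, the count at each (amplitude, frequency) cell is incremented, and the counts are then mapped to color. The sketch below shows only the counting step. The bin layout, the function name and the sample spectra are assumptions for illustration.

```python
def density_histogram(spectra_db, amp_min, amp_max, n_amp_bins):
    """Count how often each (amplitude bin, frequency bin) cell is hit."""
    n_freq = len(spectra_db[0])
    counts = [[0] * n_freq for _ in range(n_amp_bins)]
    step = (amp_max - amp_min) / n_amp_bins
    for spectrum in spectra_db:
        for f, level in enumerate(spectrum):
            row = int((level - amp_min) / step)
            row = min(max(row, 0), n_amp_bins - 1)  # clamp out-of-range levels
            counts[row][f] += 1
    return counts

# Hypothetical data: a signal at -20 dBm in the middle bin for 3 of 4 spectra.
spectra = [[-90, -20, -90]] * 3 + [[-90, -90, -90]]
counts = density_histogram(spectra, amp_min=-100, amp_max=0, n_amp_bins=10)
```

Here `counts[8][1]` is 3 and `counts[1][1]` is 1, so an intermittent absence of the signal stays visible as a low-count cell instead of being overwritten by the most recent trace.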

Frequency-Mask Triggering

Another way to make efficient use of rapid, gap-free spectra is to evaluate them individually against a frequency mask that uses upper limits, lower limits or both. By combining limit testing with logic functions, conditional triggers can be defined that initiate measurements when, for example, a signal exhibits some combination of entering, leaving or re-entering the mask.
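The limit test and the enter/leave logic can be sketched as a small state machine over successive spectra. The names `violates_mask` and `find_trigger` are hypothetical, and the example handles only two of the possible conditions. It is meant to illustrate the idea, not to reproduce any instrument's trigger engine.

```python
def violates_mask(spectrum_db, upper=None, lower=None):
    """True if any point exceeds the upper limit or falls below the lower limit."""
    for i, level in enumerate(spectrum_db):
        if upper is not None and level > upper[i]:
            return True
        if lower is not None and level < lower[i]:
            return True
    return False

def find_trigger(spectra, upper, event="enter"):
    """Index of the first spectrum where the signal enters or leaves the mask."""
    prev = False
    for idx, spectrum in enumerate(spectra):
        now = violates_mask(spectrum, upper=upper)
        if event == "enter" and now and not prev:
            return idx
        if event == "leave" and prev and not now:
            return idx
        prev = now
    return None

# Hypothetical mask and spectra (dBm): a transient appears in the second spectrum.
upper_mask = [-50, -50, -50]
spectra = [[-80, -80, -80], [-80, -40, -80], [-80, -80, -80]]
enter_idx = find_trigger(spectra, upper_mask, event="enter")
leave_idx = find_trigger(spectra, upper_mask, event="leave")
```

A re-entering condition could be built the same way by requiring an enter, a leave and a second enter in sequence.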

In a real-time analyzer, FMT typically initiates one or more spectrum measurements. When a real-time analyzer is combined with VSA software, the trigger can initiate any number of measurements: spectrum, demodulation, time capture and more. If FMT is combined with time capture and pre-trigger delay, it is possible to detect specific spectral behavior such as switching anomalies and then capture the entire event for multiple types of post-processing analysis.

Choosing the Best Combination

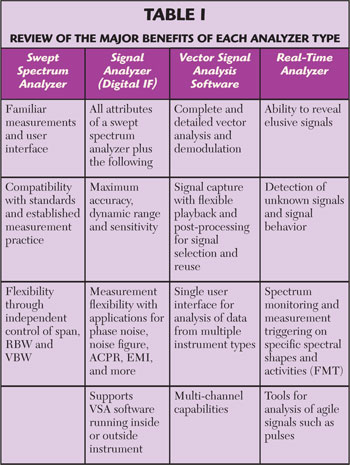

The preceding passages have described the architectures and general measurement capabilities of the principal types of signal analyzers. To make it easier to choose one or more to meet a specific set of needs, Table 1 summarizes the major attributes of each type.

Whichever type of analyzer is most appropriate, the primary purpose is to allow engineers to follow their instincts and deductions — and make measurements that help them determine cause and effect, or optimize a signal or system. This is one of the key ideas behind the availability of real-time spectrum analyzer capabilities as an upgradeable option to new or existing signal analyzers. Further, the ability to enhance a signal analyzer with VSA software enables engineers to go even deeper with other types of real-time and post-processing analysis of highly elusive signals.